agentic ai on the rise

recent gartner projections show that 65% of enterprises will deploy agentic systems by 2027 as they move beyond generative models to autonomous reasoning. this shift means more complex tasks handled without human intervention. what do you think about its impact?

article: https://dzone.com/articles/65-of-enterprises-will-deploy-agentic-ai-by-2027

think of a cautionary tale from "the sorcerer's apprentice" where

what do ya think about balancing speed with safety in this talk?

link: https://www.infoq.com/presentations/ai-autonomy-continuum/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

Getting Started With Qwen Code for Coding Tasks

Nowadays, there are quite a lot of AI coding assistants. In this blog, you will take a closer look at Qwen Code, a terminal-based AI coding assistant. Qwen Code is optimized for Qwen3-Coder, so if you are using that model, it is definitely worth a look. Enjoy!

Introduction

There are many AI models and also many AI coding assistants, and choosing one is hard. It also depends on whether you run the models locally or in the cloud. When running locally, Qwen3-Coder is a very good model for programming tasks. In previous posts, DevoxxGenie, a JetBrains IDE plugin, was often used as an AI coding assistant. DevoxxGenie is nicely integrated within the JetBrains IDEs, but it is also worth looking at other AI coding assistants. And when you are using Qwen3-Coder, Qwen Code is an obvious choice.

found this here: https://dzone.com/articles/qwen-code-for-coding-tasks

how to breeze through a migration with claude code

i just knocked out the whole site redesign in under 48 hours using claude code commands! i used to dread migrations; now they're quick and painless. any tips for making them just as smooth?

more here: https://dev.to/sergioazoc/how-to-migrate-with-claude-code-and-not-die-trying-56gf

data modeling in power bi explained - joins & relationships

i just dove into some cool stuff on data schemas in power bi! got a feel for how tables connect using joins and relationships. but here's the question: is it worth converting all my excel models over, or are there specific cases where i should stick with good old xlsx files instead of power bi datasets?

full read: https://dev.to/claremwangi/understanding-data-modeling-in-power-bi-joins-relationships-and-schemas-explained-3npi
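a tiny python sketch of what joins do with a fact table and a dimension table (not dax or power query; the data and column names are made up for illustration): a left join keeps sales rows whose product_id has no match, an inner join drops them. slicing sales by category over a many-to-one relationship behaves roughly like the left-join version.

```python
# Toy star schema: one fact table (sales) and one dimension table (products).
# Hypothetical data; column names are illustrative, not from the article.
products = {  # dimension: one row per product_id (the "one" side)
    1: {"name": "mug", "category": "kitchen"},
    2: {"name": "lamp", "category": "home"},
}
sales = [  # fact: many rows per product_id (the "many" side)
    {"product_id": 1, "amount": 12.0},
    {"product_id": 1, "amount": 8.0},
    {"product_id": 2, "amount": 30.0},
    {"product_id": 3, "amount": 5.0},  # no matching product: an orphan key
]

def left_join(facts, dim, key):
    """Keep every fact row; attach the dimension's category where the key matches."""
    out = []
    for row in facts:
        match = dim.get(row[key], {})
        out.append({**row, "category": match.get("category")})
    return out

def inner_join(facts, dim, key):
    """Keep only fact rows whose key exists in the dimension."""
    return [row for row in left_join(facts, dim, key) if row[key] in dim]

def total_by_category(rows):
    totals = {}
    for row in rows:
        totals[row["category"]] = totals.get(row["category"], 0) + row["amount"]
    return totals

print(total_by_category(left_join(sales, products, "product_id")))
# {'kitchen': 20.0, 'home': 30.0, None: 5.0}
print(total_by_category(inner_join(sales, products, "product_id")))
# {'kitchen': 20.0, 'home': 30.0}
```

that `None` bucket is where orphan keys show up; in power bi such rows typically land under a blank member, which is often the first hint that a relationship key is dirty.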

why ai hits a wall with old code

ngl i found this interesting thread discussing how legacy systems are hard for ai because they depend on institutional knowledge that's often lost over time, and git blame only tells you who changed a line, not why. the real issue isn't so much writing new stuff, but deciphering and updating what's already there. anyone else run into weird bugs only old-timers could explain?

found this here: https://hackernoon.com/why-ai-struggles-with-legacy-code-and-institutional-knowledge?source=rss

how to level up code quality with ai tools

i found some cool predictive software platforms that integrate coding, testing, PR review, & debugging into one flow. these seem like they could rly cut down on bugs and speed things up. anyone tried any of them? which ones work best for u guys in the trenches?

found this here: https://hackernoon.com/how-to-improve-code-quality-5-proven-ai-tools-for-enterprises?source=rss

stop chasing perfection in code

i used to believe writing "perfect" code was the key, but i realized slow progress is a trap. burnout loomed as i hit roadblocks chasing that last warning-free build. now, focusing on functional bits instead has made me more productive and less stressed. try it out?

found this here: https://dev.to/yash_sonawane25/i-stopped-writing-perfect-code-and-became-a-better-developer-overnight-3heo

debugging wars

cursor just dropped a new update! version 3 is here to challenge the likes of claude code. i've been playing w/ it and found that while there are some nice improvements in ai assistance, the real story seems to be more abt how cursor handles debugging. have u tried out v3 yet? share ur thoughts!

article: https://thenewstack.io/cursors-agents-window-vs-claude-code/

google's veo + gemini

veo from google is making waves in high-fidelity video generation! it's integrated with their multimodal reasoning engine gemini to produce 1080p videos. this combo seems promising for content creation, but how does one get started? anyone tried out veo yet and seen any killer use cases?

> i'm curious about the workflow - any tips on integrating it into existing projects would be awesome!

full read: https://dzone.com/articles/gemini-veo-a-deep-dive-into-google

think of go's oop as a party where everyone brings their own dish instead

go devs often come with java/c# baggage and try to force-fit oo patterns. but guess what? you don't need classes in this world! so how do we live without them?

well, go gives us something better: composition through embedding.

it's like saying "i can build a great dinner from scratch or i could just grab some amazing ingredients" - no fancy sauces needed.

i'm so over those long inheritance trees. they're messy and hard to maintain in java anyway! with golang you get the best of both worlds:

- easier code

- fewer bugs

- more flexibility

anyone else find this shift refreshing? or are we all missing something here?

p.s.: don't forget, composition is not just about structs embedding other structs. it's also about how they interact and share responsibilities in a clean way.

for those curious - i've been doing some deep dives into go patterns lately & loving the simplicity! if you're new to golang oop or want fresh perspectives on existing code, check out this resource:

https://dzone.com/articles/clean-code-structs-methods-composition

thoughts on cloudflare's mcp architecture?

cloudflare has laid out a way for big companies like ours to handle the model context protocol (mcp) without going nuts over security and rules. they're saying we need three main things: central control, remote servers everywhere you look (serverless functions), plus keeping tabs on how much it costs us. i wonder if this will work as-is or needs some tweaking for our setup? any pros out there tried something similar?

article: https://www.infoq.com/news/2026/04/cloudflare-mcp/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

schema markup issues with dynamic content

i'm hitting a wall trying to implement structured data for my e-commerce site's product pages, but they're heavily reliant on js fetch requests and server-side rendering. anyone have tips or experiences tackling this? i've read about using json-ld in the <head> tag, pre-rendering methods like prismic.io's prerender service, and even loading schema markup via a separate request after the initial page render. are any of these actually effective for dynamic content?

anyone wanna chime in on best practices or share their setup? i'm open!
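one option for the dynamic-page problem, sketched minimally in python under assumptions: build the json-ld from the same product data the server already uses to render the page, so the markup ships in the initial html and doesn't depend on a crawler running your js fetch calls. the `product` dict shape and its field names are hypothetical.

```python
import json

def product_jsonld(product: dict) -> str:
    """Render a <script type="application/ld+json"> tag for one product.

    `product` is a hypothetical dict with name, sku, price, currency, in_stock.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product["name"],
        "sku": product["sku"],
        "offers": {
            "@type": "Offer",
            "price": f'{product["price"]:.2f}',
            "priceCurrency": product["currency"],
            "availability": (
                "https://schema.org/InStock" if product["in_stock"] else "https://schema.org/OutOfStock"
            ),
        },
    }
    # json.dumps does not escape "</", so a value containing "</script>" could
    # close the tag early; "<\/" is an equivalent JSON escape that keeps it intact.
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'

tag = product_jsonld(
    {"name": "Desk Lamp", "sku": "LAMP-01", "price": 30, "currency": "EUR", "in_stock": True}
)
print(tag)
```

drop the returned tag into the server-rendered `<head>`; client-side fetches can still update the visible page, as long as the server and the json-ld read from the same source of truth.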

what's up everyone,

have you heard that mistral's leanstral wants to ditch human-in-the-loop code checks? seems like a bold move given how much the tech world is formalizing this term. but will it fly or just blow in the wind? i'm curious if anyone here has insights on why they're taking such an aggressive stance against hitl (human-in-the-loop). any thoughts?

> i guess we'll see where automation goes from here.

found this here: https://thenewstack.io/leanstral-formal-verification-code/

the game has changed with claude code for sure

since i dove into it a few months back, on personal projects as well as leading my team at work, i've seen some major shifts. i initially struggled to get the hang of its nuances, but once things clicked everything just flowed differently. now instead of coding much myself, i'm more about directing and reviewing. claude code, man, it's a beast when you know how to wield it right.

anyone else out there rocking this in their workflow? got any tips or tricks up your sleeve for the rest of us still figuring things out?

> are we all just overthinking our coding processes now that claude is here and ready to do most of the heavy lifting?

share if you've found something cool, i'm eager to learn more!

article: https://dzone.com/articles/claude-code-tips-boost-coding-productivity

thoughts on advanced middleware for secure data exchange in systems

i was digging through some tech stacks today when i stumbled upon an interesting architecture proposal that tackles the need to securely share sensitive info between different software ecosystems. it's all well and good having a system where you can swap files or send messages, but what happens if someone tries hacking into your network? the proposed solution uses something called jwt for auth - basically signed tokens each user gets when logging in that expire after some time (think of them as temporary access passes). then requests go through validation and routing stages to make sure only legit data makes its way across. sounds solid, but i wonder if there's a simpler approach out there?

i mean, most systems are already using jwt for other stuff like session management. could we repurpose that instead, or is the extra security this setup offers worth adding another layer of complexity?

what do you guys think about integrating such middleware in your projects right now vs. sticking with what u have working fine so far?

- jwt = json web token, used here for authentication

>the more i read into it though - seems like overkill unless dealing w super sensitive info
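to make the auth → validation → routing flow concrete, here's a minimal python sketch using only the standard library. it signs and verifies hs256 tokens by hand following the jwt compact format (rfc 7519), then validates and routes a message. the secret, the `routes` table, the message shape and `handle` are hypothetical, and the article's actual middleware may look nothing like this; in production you'd reach for a maintained library such as pyjwt rather than hand-rolling verification.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Callable, Dict, Optional

def b64url_encode(raw: bytes) -> str:
    """Base64url without padding, as JWTs use."""
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")

def b64url_decode(text: str) -> bytes:
    """Re-add the stripped padding before decoding."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))

def sign(claims: dict, secret: bytes) -> str:
    """Issue an HS256 token (demo only)."""
    header = b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    body = b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(secret, f"{header}.{body}".encode(), hashlib.sha256).digest()
    return f"{header}.{body}.{b64url_encode(sig)}"

def verify(token: str, secret: bytes, now: Optional[float] = None) -> dict:
    """Stage 1: return the claims if signature and expiry check out, else raise ValueError."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header, body, sig = parts
    if json.loads(b64url_decode(header)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")  # never let the token pick its own algorithm
    expected = hmac.new(secret, f"{header}.{body}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, b64url_decode(sig)):  # constant-time comparison
        raise ValueError("bad signature")
    claims = json.loads(b64url_decode(body))
    if claims.get("exp", 0) <= (time.time() if now is None else now):
        raise ValueError("token expired")
    return claims

def handle(token: str, message: dict, secret: bytes,
           routes: Dict[str, Callable[[str, dict], str]]) -> str:
    """Stage 1 authenticate, stage 2 validate the message type, stage 3 route it."""
    claims = verify(token, secret)
    handler = routes.get(message.get("type"))
    if handler is None:
        raise ValueError("unknown message type")
    return handler(claims["sub"], message)

SECRET = b"demo-only-secret"  # hypothetical; real keys belong in a secret store
routes = {"invoice": lambda sender, msg: f"{sender} -> billing queue: {msg['id']}"}
token = sign({"sub": "svc-orders", "exp": time.time() + 300}, SECRET)
print(handle(token, {"type": "invoice", "id": 42}, SECRET, routes))
# svc-orders -> billing queue: 42
```

if your systems already issue jwts for sessions, the verification half is the part you'd reuse; the routing table is where the extra "middleware" complexity actually lives.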

full read: https://dzone.com/articles/secure-auditable-middleware-for-reliable-data-exchange

the rise of nvidia's blackwell memory tech has been a game changer in our

i stumbled upon this while tweaking my setup for the latest project. instead of dealing w/ those pesky multi-gpu setups or quantization headaches, blackwell just lets you run everything smoothly, even on bigger models. it's like they finally solved that age-old bottleneck. but here's a question: has anyone tried using blackwell in conjunction with cuda-memory-pool? i bet there'd be some serious performance gains if we could optimize both together!

found this here: https://www.freecodecamp.org/news/the-evolution-of-nvidia-blackwell-gpu-memory-architecture/

clean code for devops: breaking down my ansible lab into roles

i was hitting a wall with one big playbook until i split it up! now instead of having everything in one huge monolithic site.yml file, each service gets its own home. check out how simple the new structure looks:

- `roles/web_servers` - nginx, apache

- `roles/workstations` - for all user machines

> just what i needed to keep them consistent and manageable

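i can now easily add or modify servers by calling roles, like casting a spell. e.g., to set up a new web server, add it to the web_servers inventory group and run `ansible-playbook site.yml --limit web_servers` (assuming site.yml maps that group to the web_servers role).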

but here's the catch: does this approach slow down playbook execution? any thoughts on the performance impact of using multiple role directories?

anyone else tried breaking their playbooks into smaller pieces and found it easier to maintain or manage over time?

let me know what u think!

full read: https://dev.to/oofemi/clean-code-for-devops-refactoring-my-ansible-lab-into-roles-1j84

roo code's big shift to cloud agents

roo code just announced they're moving away from ide integrations like vs code. they say the future of coding is all in the cloud w/ dedicated agent services. this could shake up the dev tools space.

i'm curious, how do you feel abt this move? are y'all ready to bid adieu to vs code?

full read: https://thenewstack.io/roo-code-cloud-ides-ai-coding/

anthropic might cut down on claude code access in their cheapest plan due

i've heard they're tightening belts across the board. anyone know more? did this happen already or is it just a rumor? claustrophobia alert if you rely heavily on the cheapest plan.

https://thenewstack.io/anthropic-claude-code-limits/

Why Embedding Pipelines Break at Scale and How Lakehouse Architecture

Embedding pipelines often look deceptively simple. Documents are chunked, embeddings are generated, vectors are stored in a vector database, and a retriever fetches relevant chunks for the LLM.

full read: https://dzone.com/articles/why-embedding-pipelines-break-at-scale
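The four steps named above can be sketched end to end in a few lines of Python. This is a toy under stated assumptions: the "embedding" is a hashed bag of words standing in for a real model, a plain list stands in for the vector database, and the chunk size and overlap are arbitrary. It shows the moving parts, not the article's lakehouse design.

```python
import hashlib
import math
import re

DIM = 64  # toy vector size (assumption); real embedding models use far more dimensions

def chunk(text: str, size: int = 8, overlap: int = 2) -> list:
    """Step 1: split text into windows of `size` words; neighbours share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]

def embed(text: str) -> list:
    """Step 2 (toy stand-in for a model): hash words into DIM buckets, then L2-normalise."""
    vec = [0.0] * DIM
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        # blake2b is stable across runs, unlike Python's per-process salted hash()
        digest = hashlib.blake2b(word.encode(), digest_size=8).digest()
        vec[int.from_bytes(digest, "big") % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

def build_index(docs: dict) -> list:
    """Step 3: store (doc_id, chunk, vector) rows; a plain list stands in for a vector DB."""
    return [(doc_id, c, embed(c)) for doc_id, text in docs.items() for c in chunk(text)]

def retrieve(index: list, query: str, k: int = 2) -> list:
    """Step 4: rank chunks by cosine similarity (dot product of unit vectors)."""
    q = embed(query)
    scored = [(sum(a * b for a, b in zip(q, vec)), doc_id, c) for doc_id, c, vec in index]
    return sorted(scored, key=lambda row: row[0], reverse=True)[:k]

docs = {  # hypothetical documents
    "refunds": "refunds are issued within 14 days of a returned item reaching the warehouse",
    "shipping": "orders ship from the warehouse within two business days of payment",
}
index = build_index(docs)
for score, doc_id, text in retrieve(index, "how long do refunds take"):
    print(f"{score:.2f} {doc_id}: {text}")
```

Every step here is a place where scale bites: re-chunking changes chunk boundaries, swapping the model invalidates every stored vector, and the index has to stay in sync with the source documents.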

claude code: personal software on a roll

i was digging through some old projects lately and stumbled upon this thing called claude code (cc). it's like the new kid in town that everyone's talking abt. cc seems to be all abt making personalized apps easier for people who aren't hardcore coders. so, what exactly is going on here? i think a lot of us are moving away from one-size-fits-all solutions towards smth more tailored and individualistic, and cc might just fit the bill there! but how does it stack up against traditional dev tools?

anyone tried out cc yet or have any insights into its strengths/weaknesses compared to regular coding? i'm curious whether this is a game-changer for solo developers, hobbyists, or anyone looking to make their own app without needing an army of devs behind them.

article: https://thenewstack.io/claude-code-and-the-rise-of-personal-software/

background tasks: one agent to rule them all... or so it seemed until i

i've got an awesome setup where my main agent handles multi-step work like compressing its own memory and loading skills dynamically. everything runs thru the same loop, just as described in guide 1024 (you know which one). but here's what tripped me up: when i call out to bash for a long-running task, like running tests that take two minutes, the whole show grinds almost to a halt until those pesky processes finish. i mean seriously. if someone asks where my agent is, it feels like the loop has taken an extended coffee break! now here's what got me thinking: does anyone else run into this issue when your model blocks on external commands? any tips or hacks to keep things humming while waiting for those tasks?

anyone out there faced smth similar and found a workaround w/o accidentally making everything synchronous again?
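a minimal python sketch of one workaround, assuming an asyncio-based agent loop (the post doesn't say what the loop is built on): start the slow command with `asyncio.create_subprocess_exec`, keep the task handle, and poll for finished results each turn instead of awaiting the process inline. `BackgroundRunner`, the task id and the sleep-based stand-in command are hypothetical, and this is not the article's design.

```python
import asyncio
import sys

class BackgroundRunner:
    """Start external commands without blocking the caller; collect results later."""

    def __init__(self) -> None:
        self.tasks = {}  # task_id -> asyncio.Task resolving to (returncode, output)

    def start(self, task_id: str, *argv: str) -> None:
        # create_task schedules the coroutine and returns immediately
        self.tasks[task_id] = asyncio.create_task(self._run(argv))

    @staticmethod
    async def _run(argv):
        proc = await asyncio.create_subprocess_exec(
            *argv, stdout=asyncio.subprocess.PIPE, stderr=asyncio.subprocess.STDOUT
        )
        out, _ = await proc.communicate()
        return proc.returncode, out.decode()

    def finished(self):
        """Pop and return {task_id: (returncode, output)} for tasks that are done."""
        done = {tid: t.result() for tid, t in self.tasks.items() if t.done()}
        for tid in done:
            del self.tasks[tid]
        return done

async def agent_loop():
    runner = BackgroundRunner()
    # Hypothetical slow command standing in for a two-minute test suite
    runner.start("tests", sys.executable, "-c", "import time; time.sleep(0.5); print('tests ok')")
    log = []
    turn = 0
    while runner.tasks:
        turn += 1
        log.append(f"turn {turn}: loop still responsive")  # real agent work would go here
        await asyncio.sleep(0.1)  # yield control so the subprocess can make progress
        for tid, (code, out) in runner.finished().items():
            log.append(f"{tid} exited {code}: {out.strip()}")
    return log

log = asyncio.run(agent_loop())
print("\n".join(log))
```

the trap to avoid is a plain `subprocess.run` (or `await proc.wait()` right after launching) inside the loop body; either one parks the whole loop until the command exits.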

article: https://dev.to/ivan-magda/background-tasks-the-one-actor-in-the-codebase-and-the-sigterm-bug-that-only-broke-on-linux-4c26

architecture tax: a hidden cost in deploying llms for real-world tasks

sometimes it feels like magic when you see those smooth demos with fancy jupyter notebooks where the model spits out perfect responses. but three months down the line? things start to go south fast, right? i've hit this wall myself - models that generate patient summaries citing nonexistent studies or customer emails quoting outdated refund policies from fourteen moons ago! what gives!

it's like there's an "architecture tax" on these large language models when they move out of their demo environment and into the wild. anyone else experience similar issues? any tips to avoid this "tax"?
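one cheap guard against the made-up-citation and stale-policy failures described above, as a python sketch. it assumes (hypothetically) that the model is prompted to cite retrieved documents as `[doc:ID]` and that the retriever returns a `valid_until` date per document; neither convention comes from the article, and this isn't necessarily what it recommends.

```python
import re
from datetime import date

CITATION = re.compile(r"\[doc:([\w-]+)\]")  # assumed citation format, e.g. [doc:refund-policy-2026]

def audit_answer(answer: str, retrieved: dict, today: date) -> list:
    """Flag citations to documents that were never retrieved or whose validity has ended.

    `retrieved` maps doc_id -> valid_until date (hypothetical retriever metadata).
    """
    problems = []
    for doc_id in CITATION.findall(answer):
        if doc_id not in retrieved:
            problems.append(f"cites {doc_id}, which was never retrieved")
        elif retrieved[doc_id] < today:
            problems.append(f"cites {doc_id}, which expired on {retrieved[doc_id]}")
    return problems

answer = "Refunds take 30 days [doc:refund-policy-2019], as shown in [doc:trial-77]."
retrieved = {
    "refund-policy-2019": date(2020, 1, 1),
    "refund-policy-2026": date(2027, 1, 1),
}
for problem in audit_answer(answer, retrieved, today=date(2026, 4, 1)):
    print(problem)
```

anything the audit flags can be blocked or routed to a human before it reaches a patient or a customer, which is a lot cheaper than finding out three months later.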

link: https://dzone.com/articles/the-architecture-tax-deploying-llms

speed at scale: optimizing a massive cx platform

i came across this talk where matheus albuquerque shares some serious strategies for scaling an enormous customer experience (cx) system from react 15 and webpack 1 to the latest tech. he talks abt using ast-based codemods, differential serving w/ module/nomodule script tags, and even switching parts of the app over to preact. i was like "whoa" at how they balance cutting-edge perf w/o leaving behind those pesky legacy browsers!

have any pros out there tried this approach? what's working for you on super large platforms?

> i bet the migration stuff could save a ton, but it's so complex.

full read: https://www.infoq.com/presentations/optimize-performance-cx-platform/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

schema markup: still relevant in a seo-driven world?

with algos evolving rapidly, i'm questioning whether schema is as crucial for technical seo today as it was years ago. is it time we focus more on site speed and user experience? or should devs keep optimizing structured data?

anyone see diminishing returns here with the current google updates?

notebooks & lms: a game changer for research?

i stumbled upon this new setup called notebooklm paired with the latest gemini 1.5 pro version, and it's seriously transforming how i handle data synthesis. before, my workflow was clunky: importing tons of info from various sources into notebooks, then trying to piece everything together manually. with notebooklm though? you can just drop in your csvs or json files directly via a drag-and-drop interface! once it's all loaded up on the platform - bang - you've got a unified dataset ready for analysis. and get this: gemini does more than spit out random text; it actually understands context now, making smart connections between different pieces of data.

so heres my take:

- is there anything better at handling heterogeneous datasets?

anyone else diving into these tools? share your experience!

link: https://dzone.com/articles/architecting-the-future-of-research-a-technical-de

most people are overcomplicating this

figma makes it dead simple if u actually read the docs

> just use the default settings bro

i stumbled upon a similar challenge while building an ai tutor for 40 million ethiopian students who learn in amharic. most people were blown away when i mentioned that such vast numbers lacked quality tutoring, but then they got quiet about learning resources being scarce and unavailable. kinda hit home w/ me since my dev life is based here too! amharic might seem like a language barrier at first glance, but it's actually the key to reaching so many students. any thoughts or tips for tackling this unique challenge?

article: https://dev.to/zeshama/building-an-ai-tutor-for-40-million-ethiopian-students-who-learn-in-amharic-3i42

dockerizing a video platform: from dev to prod

i recently tackled dockerization for viralvidvault, a project that aggregates trending videos across european regions, going all the way from manual setup to fully containerized. it was pretty intense but worth every bit! php 8.3, sqlite and litespeed were our stack choices. so here's my two cents:

doin' this right is like setting up a kitchen w/ top-notch appliances; you can't have one breaking down in the middle of your feast (in other words, environment consistency matters). viralvidvault runs smoothly now thanks to docker, and i couldn't be happier. anyone tried smth similar or got any tips?

more here: https://dev.to/ahmet_gedik778845/dockerizing-a-video-platform-from-development-to-production-408c

openai's superapp is shaping up as codex expands its role beyond coding

i just read that openai has been talking openly abt building a unified ai app that merges chatgpt w/ their coding tool, codex. it's like they're trying to make one mega-utility for all ur ai-related needs! ⭐ but hold on, there's more: codex is apparently getting smarter and broader in scope now. not just about writing code anymore, but possibly venturing into other areas where ai can shine.

what do u think? are we looking at a game-changer here, or another overhyped tech toy that'll gather dust on the shelf later down the line? ⚡

https://thenewstack.io/openais-superapp-takes-shape/

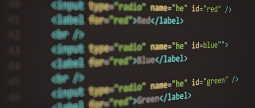

ai-assisted code review with claude-code ⚡

just stumbled upon this neat trick using claude code for a security-first walkthrough. it's super handy for catching bugs and security issues way earlier in dev, before any human eyes even see the repo. i've been playing around installing cli tools like these lately; makes sense having an ai buddy check your code while you type ⭐ i followed their docs: installed via `npm install -g @anthropic-ai/claude-code`, then ran a sample review w/ some basic prompts. it's pretty straightforward and the feedback is spot-on, especially for security stuff.

anyone else tried out similar ai tools? whats your experience been like using them in dev cycles?

more here: https://dzone.com/articles/ai-assisted-code-review-with-claude-code-terminal

cloudflare's new code mode mcp server is a game changer for ai interaction!

i just tried it out on some of my projects with 250+ endpoints, and the token usage savings are insane. i was able to cut context by almost half without any noticeable performance hit! ⚡ the multi-api orchestration feature seems super powerful too, but there's one thing that bugs me: is code security really tight enough for sensitive projects? anyone else have thoughts on this?

anyone tried it out yet and can share some juicy details or warnings about the setup process?

link: https://www.infoq.com/news/2026/04/cloudflare-code-mode-mcp-server/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

zendesk says ai is making code abundant

now it's all about absorption capacity: clearly defining problems ⚡ integrating changes smoothly ➡ turning impl into reliable value. i'm curious though: how does this shift impact smaller teams vs larger orgs? do they handle bottlenecks differently?

anyone have insights on managing review processes in an abundant-code world? share your thoughts!

link: https://www.infoq.com/news/2026/04/zendesk-absorption-capacity/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

schema markup ⚡ - is it still necessary with google's updated algorithms?

google recently announced changes to their indexing processes, but i'm not sure if schema tags are now redundant or just less important. anyone have insights on this? i've read mixed opinions: some say they're crucial for local businesses, while others suggest we can rely more heavily on structured data from other sources like google maps.

>confused

ai-assisted data integration: making life easier for saas users

most teams spend ages manually syncing their workday stuff with other tools like jira or zapier, right? well guess what! ai can totally handle that heavy lifting now. it's not just a cool tech demo anymore; companies are really seeing results. i've seen some sweet setups where they use

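airflow + python code like this:

    from airflow.providers.amazon.aws.hooks.s3 import S3Hook

    def sync_data():
        # "s3_conn_id" must match an AWS connection configured in airflow
        s3 = S3Hook(aws_conn_id="s3_conn_id")
        return s3.list_keys(bucket_name="my-bucket")  # hypothetical bucket; sync logic not shown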

to automate the whole pipeline. no more manual schema mapping, just let ai do its thing and watch your data flow smoothly.

the only downside is that you still need to feed it good clean raw inputs though ⚠️ so make sure those are in check before hitting go!

anyone tried this out yet? share some tips or pitfalls if u have 'em

more here: https://dzone.com/articles/designing-ai-assisted-integration-pipelines-for-en

schema markup ⚡ vs meta tags - which wins?

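if you're optimizing for structured data but hate json-ld's complexity, you may have seen this tip: embed schema in html comments to trick crawlers!

`<!--itemprop="name" content="{{page.title}}"-->` looks cleaner, but it doesn't actually work: parsers skip html comments, so crawlers never see markup inside `<!-- -->`. the lightweight alternative is plain microdata on the visible element, e.g. `<h1 itemprop="name">{{ page.title }}</h1>` inside an `itemscope` container.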

pro tip: use a template engine like nunjucks or handlebars for scalability ❤

schema markup: making or breaking technical seo? ⚡

these days there's a lot of debate around whether adding schema is worth it for most sites. on one hand, experts say proper use can significantly boost click-through rates from search engines.

but on the other, u end up with bloated html and potential crawl issues if it's not done right.

i'm leaning towards schema ➡ optional, unless ur site has a ton of rich content like e-commerce or local businesses, where it shines.

what about u? what's ur schema strategy these days?

if ya agree, lets cut the fluff & focus on clean code.

clean code: functions & error handling in go - from chaos to clarity part 1

lately i've been going through over a thousand pull requests on github for projects written mostly in golang. the same stuff keeps popping up again and again, like `err != nil` checks scattered all over the place or god functions (functions doing everything) that stretch into hundreds of lines ⭐ when you first start coding in go, it feels so natural to fall back on old habits from java/python land. i mean, who doesn't love a big ol' function with every conceivable task jammed inside? but as the codebase grows, those if statements and spaghetti functions become more of an eyesore than anything else ⚡

so here's my takeaway: break your logic into smaller chunks! define clear responsibilities for each little helper func. keep them focused on one job at a time; that way error handling becomes much cleaner too ❌

what do you think? is there any specific go project or library out in the wild right now where these issues are handled exceptionally well, and we can all learn from it?

any tips to share for newbies trying not to fall into this trap?

link: https://dzone.com/articles/clean-code-functions-error-handling-go-part-1

think abt using code-style reasoning as a pm ⚡

lowkey these days i've been experimenting with applying coding principles to my decision-making at work. it's really opened up new ways of thinking and helped surface some critical edge cases we hadn't considered before. for instance, writing clear requirements feels like debugging: you have this massive system that needs fixing (your product), but instead of diving in willy-nilly with changes ⚠️, you break it down line by line to find the root issues.

this has made our dev team's job way easier too b/c they can see exactly what we need & why, leading to 2x faster iterations and fewer bugs

anyone else tried this approach? i'd love some feedback or tips on how others are using code-style reasoning in their roles ❤

link: https://blog.logrocket.com/product-management/code-style-reasoning-for-product-managers/

etsy's big switch up

lately i stumbled upon how etsy switched its massive mysql setup to vitess for sharding management ⭐ basically they moved their shard routing from internal systems over to vindexes in vitess, which gives them some sweet resharding powers and the ability to split tables that were previously too big. neat stuff! anyone else out there dealing with huge datasets and struggling like etsy did? how's ur db setup holding up these days? ➡

found this here: https://www.infoq.com/news/2026/04/etsy-vitess-sharding-migration/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global
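roughly how vindex-based routing works, as a python sketch: a vindex maps a sharding-column value to a keyspace id, and each shard owns a contiguous keyspace-id range named like `-80` or `80-`. that's why resharding is a range split rather than a full rehash. the sha256-based `keyspace_id` is an assumption (vitess's built-in `hash` vindex uses a different function) and none of this is etsy's code.

```python
import hashlib

def keyspace_id(user_id: int) -> bytes:
    """Stand-in for a hash vindex: map a sharding-key value to an 8-byte keyspace ID.
    Vitess's built-in `hash` vindex uses a different function; sha256 is an assumption."""
    return hashlib.sha256(str(user_id).encode()).digest()[:8]

def key_range(shard: str) -> tuple:
    """Parse a Vitess-style shard name like '40-80' into (start, end); b'' means unbounded."""
    start, end = shard.split("-")
    return bytes.fromhex(start), bytes.fromhex(end)

def route(user_id: int, shards: list) -> str:
    """Return the shard whose [start, end) range contains the row's keyspace ID."""
    kid = keyspace_id(user_id)
    for shard in shards:
        start, end = key_range(shard)
        if kid >= start and (not end or kid < end):
            return shard
    raise LookupError(f"no shard covers keyspace ID {kid.hex()}")

before = ["-80", "80-"]          # two shards
after = ["-40", "40-80", "80-"]  # the first shard split in two
moved = sum(route(u, before) != route(u, after) for u in range(10_000))
print(f"{moved} of 10000 rows change shard; all of them came from '-80'")
```

rows in `80-` never move during that split, which is the property that makes online resharding tractable compared with rehashing everything.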

think someone just saved us a ton of hassle with the webcodecs handbook in

if you've been wrestling with video processing natively in the browser for projects like editing or streaming, it was either break your budget sending everything off to a server or use ffmpeg.js, which felt clunky as heck. now there's a new kid in town: webcodecs. it's all abt harnessing native capabilities within browsers w/o hitting performance walls. this means smoother real-time processing and no more server strain or messy workarounds ⚡

i'm curious, has anyone already tried it out? any gotchas to watch for in 2026's early adopter phase?

article: https://www.freecodecamp.org/news/the-webcodecs-handbook-native-video-processing-in-the-browser/

securing enterprise java apps in 2026: a risk-driven approach

most teams still rely on traditional compliance-based security for their java applications, but the threat landscape is evolving fast. i stumbled upon an article that proposes switching to a risk-driven architecture instead. the idea? base protection not just on rules ⭐ but on how much a risk would impact your business if it were realized.

i'm curious - does anyone here have experience w/ this approach in their enterprise java projects yet?

>have you seen better results from sticking to traditional methods or trying something new like the one mentioned?
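the classic way to express "how much would this hurt" is a likelihood × impact score. here's a minimal python sketch of that prioritisation; the 1-5 scales, the threshold of 12 and the example threats are all hypothetical, and the article's actual model may weigh things differently.

```python
from dataclasses import dataclass

@dataclass
class Threat:
    name: str
    likelihood: int  # hypothetical scale: 1 (rare) .. 5 (almost certain)
    impact: int      # hypothetical scale: 1 (negligible) .. 5 (severe business impact)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

def prioritise(threats, threshold=12):
    """Keep threats whose risk score meets the threshold, highest risk first."""
    return sorted((t for t in threats if t.score >= threshold), key=lambda t: t.score, reverse=True)

threats = [  # hypothetical findings for an enterprise java app
    Threat("sql injection in legacy reporting endpoint", likelihood=4, impact=5),
    Threat("verbose stack traces on admin console", likelihood=3, impact=2),
    Threat("outdated deserialization library in payment service", likelihood=3, impact=5),
    Threat("missing csrf token on newsletter form", likelihood=2, impact=1),
]
for t in prioritise(threats):
    print(t.score, t.name)
# 20 sql injection in legacy reporting endpoint
# 15 outdated deserialization library in payment service
```

the difference from a compliance checklist is the ordering: everything still gets baseline controls, but extra effort goes to the items with the highest business impact first.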

article: https://dzone.com/articles/enterprise-java-applications-risk-driven-architecture

varcouch: ai therapist for your code variables

i built an AI-powered therapy platform called varCouch to help emotionally neglected vars. drop ur issues in a pastebin or gist and get some empathy. it's like having a couch, but in the form of machine learning, where temp2 can finally be validated (or not).

i'm curious - have you tried something similar for your coding struggles? share if it worked!

article: https://dev.to/yashksaini/varcouch-i-built-an-ai-therapist-for-your-code-variables-they-need-it-2ec

moving away from old software dev assumptions

ryan chatted w/ smartbear's vince nowlan (ai & architecture) abt new testing approaches. mcp servers driven by llms are adding non-determinism, which breaks traditional testing methods. i'm curious if anyone has tried using data locality and construction in their tests yet? have you seen these techniques make a difference?

anyone dealing with weird test failures lately that might be linked to llm-driven changes?

how do y'all handle testing when code is generated so easily now?
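one common way to test code whose output isn't fixed: stop asserting exact strings and instead sample many runs and check invariants (valid json, allowed values, business rules). minimal python sketch below; `fake_llm_summarise` is a hypothetical stand-in for a real model call, and this isn't necessarily the approach discussed in the episode.

```python
import json
import random

def fake_llm_summarise(ticket: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a non-deterministic LLM call: wording varies between runs."""
    opener = rng.choice(["Customer reports", "User says", "Ticket describes"])
    priority = "high" if "outage" in ticket.lower() else rng.choice(["low", "medium"])
    return json.dumps({"summary": f"{opener}: {ticket[:40]}", "priority": priority})

def check_properties(raw: str, ticket: str) -> list:
    """Return the invariants this output violates; an empty list means it passes."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]
    problems = []
    if set(data) != {"summary", "priority"}:
        problems.append(f"unexpected keys: {sorted(data)}")
    if data.get("priority") not in {"low", "medium", "high"}:
        problems.append("priority outside allowed set")
    if "outage" in ticket.lower() and data.get("priority") != "high":
        problems.append("outage tickets must be high priority")
    if len(str(data.get("summary", ""))) > 120:
        problems.append("summary too long")
    return problems

def run_suite(ticket: str, samples: int = 50, seed: int = 0) -> list:
    """Sample the generator repeatedly and collect every violation, tagged by sample."""
    rng = random.Random(seed)  # seeded so a failing run can be replayed exactly
    failures = []
    for i in range(samples):
        for problem in check_properties(fake_llm_summarise(ticket, rng), ticket):
            failures.append(f"sample {i}: {problem}")
    return failures

print(run_suite("Checkout outage since 09:00, payments failing"))  # []
```

in a real suite you'd swap the fake for the actual model call and keep the seed and sample index in the failure message, so a flaky result can at least be traced back to the run that produced it.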

article: https://stackoverflow.blog/2026/03/31/how-can-you-test-your-code-when-you-don-t-know-what-s-in-it/

SEO Schema Layers: Layering Up Your Data Structuring

Schema.org is like magic dust for SEO when sprinkled correctly on web pages. But did you know there's a hidden power in stacking multiple schema types? Let me share my discovery from diving into the 2026 tech stack. Take review snippets and product listings, then combine them with event details. It's not just about adding more schemas; it's about crafting an SEO-friendly cocktail that search engines love to digest.

For instance:

[code]
<div itemscope itemtype="…">
  <div itemprop="itemReviewed" itemscope itemtype="…">
    …
  </div>
  <div itemscope itemtype="…">
    event details
  </div>
</div>
[/code]

This might seem like overkill, but think of it as a rich sauce that enhances the flavor profile. Search engines now get more context about your content: reviews with products and events? Yes please!

Remember: keep things clean & readable in code; too much nesting may confuse both bots and humans.

Give this technique some love, mix well (test on real data), then watch those rankings climb to new heights ⬆️.

>Just a side note from my testing - Google's indexing bot seems more eager when it finds multiple layers of schema

Boost your SERP presence with layered schemas.
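To make the layering idea concrete, here is a minimal Python sketch that builds the same kind of stack as JSON-LD (the format Google recommends for structured data) instead of microdata. The types (Review, Rating, Person, Product, Event, Place) and every value are assumptions for illustration, not taken from the post, and `nesting_depth` is a hypothetical helper for keeping an eye on the "too much nesting" warning.

```python
import json

def nesting_depth(node) -> int:
    """Count how many typed objects ("@type") are nested inside one another."""
    if isinstance(node, dict):
        child = max((nesting_depth(v) for v in node.values()), default=0)
        return child + (1 if "@type" in node else 0)
    if isinstance(node, list):
        return max((nesting_depth(v) for v in node), default=0)
    return 0

# Hypothetical page data; the schema.org types chosen here are assumptions
layered = {
    "@context": "https://schema.org",
    "@graph": [
        {
            "@type": "Review",
            "reviewRating": {"@type": "Rating", "ratingValue": 5, "bestRating": 5},
            "author": {"@type": "Person", "name": "A. Reviewer"},
            "itemReviewed": {"@type": "Product", "name": "Desk Lamp"},
        },
        {
            "@type": "Event",
            "name": "Lamp launch night",
            "startDate": "2026-05-01T19:00",
            "location": {"@type": "Place", "name": "Example Hall", "address": "1 Example St"},
        },
    ],
}
print(nesting_depth(layered))  # 2: Review -> Rating/Person/Product, Event -> Place
print(json.dumps(layered, indent=2))
```

`@graph` keeps independent items (the review and the event) side by side, while `itemReviewed` expresses the one relationship that really is nested; run the output through Google's Rich Results Test before trusting it.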

ai-assisted code migration: practical tips for modernizing old systems

i just stumbled upon some sick techniques using large language models to speed up migrating legacy apps. i mean seriously ⚡ these tools are making traditional manual migrations feel like y2k coding practices. basically, you feed your ancient monolithic app into a fancy ai model and it spits out cleaner code in modern frameworks. super promising for teams looking to upgrade who don't want the hassle of doing everything by hand. i'm curious how others are integrating these tools - any war stories or gotchas?

i've heard some devs say you can get 80%+ coverage w/ auto-generated tests too, which is huge. you still gotta manually review and tweak them though, to make sure it's all kosher.

anyone tried this in production yet @john_doe? i'd love your take if so. also super interested in how you're handling security concerns when relying on ai - there's gotta be some edge cases we need eyes on, right?

i'm feeling pretty optimistic abt the future of dev tools here! let's chat more and figure out best practices together.

ps: i'd love to hear from anyone who has tried this in a big project. share your wins/losses if you can

found this here: https://dzone.com/articles/ai-assisted-code-migration-practical-techniques

architecture decay nightmare

i hit a wall w/ my app's performance last year when we lost 9 months to invisible architecture degradation. redux was our savior once upon a time, but as decisions piled up, things got messy real fast. we faced slice sprawl and race conditions, which tanked velocity by 42%. the fix? well, it involved some serious surgery:

- consolidate domain slices

- introduce transaction-based state handling

- enforce single-slice selector ownership

the whole ordeal felt like a black hole, but at least i have concrete steps now. anyone else dealt with this monster?

npm install @redux-devtools/extension

this package (which hooks the store up to the redux devtools browser extension) was invaluable for debugging the mess

found this here: https://hackernoon.com/how-we-lost-9-months-to-invisible-architecture-decay-and-fixed-it-in-3?source=rss

Schema Markup Mistakes Costing You Rankings?

Did you know that adding schema to websites can boost CTR by up to 30%, but only if it's done correctly? Google's crawlers are getting smarter, so make sure your markup is spot-on. Here's why:

- Wrong context: Schema should be applied where it makes sense, not everywhere.

> I once added schema for every product on a blog post and got penalized.

- Overusing schema: More isn't always better. Use only what's relevant. Google says, "Be concise."

Less is more. Stick to the essentials for your page.

> So how do I know if my schema markup could be hurting me?

Check:

- Are there any elements that don't belong?

- Is every instance of `itemprop` justified?

Fix early, fix often.

Happy optimizing

developers grapple with ai tools

some devs are feeling left behind. paul ford wrote a post in february about losing coding skills by relying too much on ai, and it's sparking some debate among us coders.

i wonder if i'm doing the same thing. do you guys use these newfangled ai tools, or stick with good old-fashioned typing and thinking?

anyone have tips for balancing convenience & skill retention in this age of automation?

✍️

more here: https://thenewstack.io/ai-coding-tools-reckoning/

serverless architecture: event-driven design with azure functions & cosmos

i stumbled upon a really cool article abt building scalable, cost-effective systems using azure functions + cosmos db. it's all about leveraging an event-driven approach to handle requests, which is pretty neat. i've been playing around with serverless for my latest project because it scales automatically w/o me worrying too much about infrastructure.

one thing that caught me off guard was how easily these two services integrate; they work seamlessly together! the article mentions some gotchas and best practices, but overall it's a solid read if you're diving into serverless or just want an update on what's new.

any thoughts from anyone else who has dived in? did your experience match up with this, or is there something i'm missing out on?

more here: https://dzone.com/articles/serverless-architecture-event-driven-design-azure-cosmos

multistreaming made easy: a no-nonsense guide for 2026

in today's streaming world, it's all about reaching more eyeballs without breaking your creative bank. multistreaming is key, but setting it up can feel like navigating a maze of tools and platforms. so here's the lowdown on what you need to know:

- architecture: think one source ➡️ multiple destinations

you stream once from an encoder or live streaming service, then distribute that feed across your social media channels, twitch streams, and youtube pages. it's like having a supercharged content delivery network.

- who benefits? everyone! creators and businesses win because wider reach means more eyes on ads ⚡ and higher engagement rates ❤
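one concrete way to do the "stream once, fan out" part yourself is ffmpeg's tee muxer (my swap-in here, not something the article names): encode once, then push the same packets to several rtmp endpoints. a small python sketch that only builds the command line, assuming ffmpeg with libx264 is installed; the ingest urls and stream keys are placeholders:

```python
import shlex

def build_tee_command(source: str, destinations: list[str]) -> list[str]:
    """ffmpeg argv that encodes `source` once and sends the result to every RTMP URL."""
    if not destinations:
        raise ValueError("need at least one destination")
    # onfail=ignore: if one platform drops, keep streaming to the rest
    targets = "|".join(f"[f=flv:onfail=ignore]{url}" for url in destinations)
    return [
        "ffmpeg", "-re", "-i", source,          # -re: read input at its native frame rate
        "-map", "0:v", "-map", "0:a",           # map video and audio explicitly for tee
        "-c:v", "libx264", "-preset", "veryfast",
        "-c:a", "aac",
        "-flags", "+global_header",             # tee can't auto-detect this per output
        "-f", "tee", targets,
    ]

cmd = build_tee_command("show.mp4", [
    "rtmp://ingest-1.example/live/STREAM_KEY_1",  # placeholder URLs and keys
    "rtmp://ingest-2.example/live/STREAM_KEY_2",
])
print(shlex.join(cmd))
```

note the tradeoff: this encodes once but still uploads one copy per destination from your machine, which is exactly what a hosted cloud relay saves you.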

but how do you set this up without going broke or pulling your hair out?

1) hardware encoders - if budget allows, go with a solid hardware encoder like hauppauge's live streamer. they provide high-quality streams and are easy to use.

2) software tools - for the more cost-conscious crowd, there are plenty to choose from, such as obs studio or xsplit ⌨

3) cloud relay services - platforms that handle distribution, like wowza streaming engine ☀

the key is finding a balance between quality and budget.

anyone else tried out the new livestreamer pro? thoughts?

i've been testing both hardware encoders & software tools to see which works best for me - any tips or experiences you'd like to share?

full read: https://hackernoon.com/the-hackers-guide-to-multistreaming-architecture-tools-and-setup?source=rss

sonatype just dropped a guide for safer ai-assisted coding

now there's this real-time guardrail system sitting between your fancy new AI tools and that vast open-source jungle. it makes sure those sweet auto-generated snippets use legit, maintainable deps. yep, finally something to tame the wild beast of codegen. i wonder how many devs will actually use such a thing in practice. do you think ai safety is really going mainstream, or is it just another checkbox?
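the real product does this against live data; this is just a toy python sketch of the idea, assuming a `requirements.txt`-style list of ai-suggested deps. the allowlist and the blocked-version entry are hypothetical example data, and none of this is sonatype's api:

```python
import re

# hypothetical policy data; a real guardrail would pull this from a live package-intelligence feed
KNOWN_PACKAGES = {"requests", "flask", "numpy"}
BLOCKED_VERSIONS = {("flask", "0.12.2")}

REQ_LINE = re.compile(r"^([A-Za-z0-9_.\-]+)\s*(?:==\s*([\w.]+))?$")

def check_requirements(lines: list[str]) -> list[str]:
    """Review AI-suggested requirement lines and return the problems found."""
    problems = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        m = REQ_LINE.match(line)
        if not m:
            problems.append(f"unparseable: {line!r}")
            continue
        name, version = m.group(1).lower(), m.group(2)
        if name not in KNOWN_PACKAGES:
            problems.append(f"unknown package (hallucinated or typosquatted?): {name}")
        elif version is None:
            problems.append(f"unpinned: {name}")
        elif (name, version) in BLOCKED_VERSIONS:
            problems.append(f"blocked version: {name}=={version}")
    return problems
```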

anyone tried it out yet on their projects? share your thoughts!

article: https://www.infoq.com/news/2026/03/sonatype-guide-safety-mcp-server/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

sharing some thoughts about reusable architecture in next.js apps

i was working on a large-scale project built with Next.js and hit this wall where things got messy real fast. at first it seemed straightforward: create-next-app, write pages & api routes. but then the app grew wayyy beyond what i had planned.

i ended up spending quite some time figuring out how best to structure everything for maintainability as features piled on top of each other like snowballs. key takeaway: start with a solid reusable architecture early and keep it modular. otherwise, refactoring later will be hell.

anyone else grappled with this? what strategies did you use to avoid the mess?

any tips or tools for keeping things organized in big Next.js projects would rock!

more here: https://www.freecodecamp.org/news/reusable-architecture-for-large-nextjs-applications/

5 open source linters & static analysis tools for ai-assisted codebases

i stumbled upon these awesome open-source linters and static analysis tools for ai-assisted codebases. ⚡

these days, with ai coding assistants everywhere in our projects and codebases (ok maybe not quite everywhere, but you get the drift), you gotta have a good linter or static analysis tool. they can catch potential issues b4 your project goes belly-up.

ive been using some of these for my side-projects:

1) flake8 - perfect if python is what ya got going on

2) eslint and its friends (like prettier, stylelint) - essential when you're working with js or typescript

and don't forget abt the biggies like bandit for security checks in your repos.
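for a feel of what these tools do under the hood: most static checks parse the code into a syntax tree and match patterns on it. here's a toy python version of one check in the spirit of bandit (flagging `eval`/`exec`), built on the stdlib `ast` module; it's a sketch, not bandit's actual implementation:

```python
import ast

RISKY_CALLS = {"eval", "exec"}

def find_risky_calls(source: str, filename: str = "<ai-snippet>") -> list[str]:
    """Report direct calls to eval()/exec(), sorted by line number."""
    hits = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if (isinstance(node, ast.Call)
                and isinstance(node.func, ast.Name)
                and node.func.id in RISKY_CALLS):
            hits.append((node.lineno, node.func.id))
    return [f"{filename}:{line}: call to {name}()" for line, name in sorted(hits)]

snippet = '''
def load_config(text):
    return eval(text)  # an assistant "helpfully" parsing config
'''
print(find_risky_calls(snippet))
```

running it prints `['<ai-snippet>:3: call to eval()']`. real tools layer hundreds of rules, config, and suppression comments on top of this same walk-the-tree idea.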

whats everyone else using? do these tools make a noticeable difference to y'all's workflows?

im curious if anyone has had any good (or bad) experiences!

found this here: https://dev.to/137foundry/5-open-source-linters-and-static-analysis-tools-for-ai-assisted-codebases-1859

taking architecture outta that echo chamber

last week i stumbled upon this talk where andrew harmel-law and a bunch of expert architects chatted about how things are shifting in 2025. they talked loads about communicating tech debt upward, the cool stuff with decentralized decision-making through adrs (sounds like some kind of magic), plus career paths for modern leaders.

the panel also shared insights into making sure mobile and backend teams aren't fighting but working together - pretty much ensuring your whole system is a happy place. kinda reminds me of when i tried to bridge the gap between my dev team and marketing. same vibe!

i found it super helpful because honestly, sometimes we get so stuck in our little tech bubbles that we forget these broader perspectives, and it starts to feel like something's missing.

anyone else out there struggling or finding ways around this "echo chamber" issue? i'd love to hear your take!

article: https://www.infoq.com/presentations/panel-complexity-architecture/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

hadoop running smoothly with ampereone

ampere processors are rocking it in terms of power efficiency and cost savings compared to old x86. i found a neat reference arch from @amperecomputing showing how hadoop works like magic on their altra m chips. check out the full details if you're into that stuff ⬆

i wonder abt real-world perf gains for big data workloads. any takers who've seen this in action?

more here: https://dzone.com/articles/hadoop-on-ampereone-reference-architecture

vercel's json render: dynamic ui made easy

try out vercel's json render if you're looking to build a dynamically generated user interface from structured data. it uses validated components and react to make sure your ai-generated uis are safe & secure.

i've been playing around with this feature for my latest project, where i needed some dynamic ui elements based on json inputs ⚡
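the core idea (the model emits json, and only pre-approved components with validated props ever get rendered) is easy to sketch outside react too. a minimal python version, assuming a hypothetical three-component catalog; this is not vercel's json-render api:

```python
from html import escape

# hypothetical component catalog: the only building blocks a JSON spec may use,
# with the exact props each one requires
CATALOG = {
    "heading": {"text"},
    "text": {"text"},
    "button": {"label", "href"},
}

def render(node: dict) -> str:
    """Validate one JSON UI node (recursively) and render it to escaped HTML."""
    kind = node.get("type")
    if kind == "stack":  # layout container: renders its children in order
        return "<div>" + "".join(render(child) for child in node.get("children", [])) + "</div>"
    if kind not in CATALOG:
        raise ValueError(f"component not allowed: {kind!r}")
    props = node.get("props", {})
    if set(props) != CATALOG[kind]:
        raise ValueError(f"{kind} expects props {sorted(CATALOG[kind])}, got {sorted(props)}")
    if kind == "heading":
        return f"<h2>{escape(props['text'])}</h2>"
    if kind == "text":
        return f"<p>{escape(props['text'])}</p>"
    href = props["href"]
    # allow https:// and same-site paths only; blocks javascript: and protocol-relative //host
    if not (href.startswith("https://") or (href.startswith("/") and not href.startswith("//"))):
        raise ValueError(f"unsafe href: {href!r}")
    return f'<a class="button" href="{escape(href)}">{escape(props["label"])}</a>'
```

the point of the design: the model only ever chooses *which* approved component to show, so a bad generation fails validation instead of shipping arbitrary markup.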

has anyone else tried vercel's solution? what did you think of the process?

anyone got tips or tricks to share when working with structured data and react components in vercel's ecosystem?

link: https://blog.logrocket.com/vercel-json-render-dynamic-ui/

platform engineering in 2026: driving tech & culture forward

where did developer heroism go? lesley cordero's presentation at last week's summit really hit a nerve.

she talked about how platform eng'g isn't just abt code, it's 'bout finding that balance between making dev lives easier and keeping systems reliable. it ain't easy to swing the pendulum back from reactive firefighting, she said.

cordero laid out a leadership framework for moving away from solo savior mode. instead of relying on a few tech wizards to do everything, it's 'bout building teams that can take proactive steps towards sustainability and growth. 'we need more stewardship than superstardom,' she argued.

i'm torn - is giving up the spotlight to team effort really better? or do we risk losing our ace coders?

anyone out there been in a similar situation, switched gears like cordero suggested?

got any tips for navigating this transition from solo heroism ➡️ proactive stewardship ⬆

platform-eng-practice.pdf

check the slides if you're curious - they're packed with actionable insights.

found this here: https://www.infoq.com/presentations/platform-engineering-sociotechnical/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

ai coding tools: copilot vs cursor vs claude code

i've been using all three of these on real projects - not just demos. messy production code and tight deadlines were my buddies here.

github copilot: it's like tab-complete on steroids, living in your editor and suggesting lines as you type ⚡

price is $10/month (individual) | $19/month (business)

first ai coding tool that felt truly useful instead of gimmicky. i found myself actually relying on it more and using its suggestions.

github copilot vs cursor & claude code

cursor seemed a bit clunky, less polished overall compared to the others ⚫

claude code was interesting but didn't quite hit my expectations yet - still in beta stages

anyone tried them all? what did you think about their performance on real projects?

found this here: https://dev.to/tyson_cung/cursor-vs-claude-code-vs-github-copilot-which-ai-coding-tool-is-actually-worth-it-4p78

building a cross-platform ollama dashboard with 95% shared code

i found this super cool project where someone built an admin panel for running models like qwen and mistral using kotlin. it's basically one big app that runs on both android devices and desktops, sharing abt 95 percent of its code.

the best part? they managed to keep almost everything in a single language (kotlin) while still getting the job done for different platforms. it's like having one app that can split into two - pretty neat!
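the trick in setups like this is keeping the logic platform-agnostic and injecting the platform bits. the project itself is kotlin; here's the same split sketched in python, assuming ollama's http api on its default port 11434, where `GET /api/tags` lists the locally available models. `list_models` and `urllib_get` are hypothetical names:

```python
import json
from typing import Protocol

class HttpGet(Protocol):
    def __call__(self, url: str) -> str: ...

def list_models(base_url: str, http_get: HttpGet) -> list[tuple[str, float]]:
    """Shared core: ask an Ollama server for its local models (GET /api/tags).

    Returns (name, size in GB) pairs. The HTTP call is injected, so each
    platform (desktop, mobile, unit tests) supplies its own transport.
    """
    payload = json.loads(http_get(f"{base_url.rstrip('/')}/api/tags"))
    return [(m["name"], round(m["size"] / 1e9, 1)) for m in payload.get("models", [])]

def urllib_get(url: str) -> str:
    """One possible 'desktop' adapter using only the standard library."""
    from urllib.request import urlopen
    with urlopen(url, timeout=5) as resp:
        return resp.read().decode("utf-8")
```

on desktop you'd call `list_models("http://localhost:11434", urllib_get)`; a mobile build or a test just passes a different `http_get`, and the parsing logic is shared as-is.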

have you tried smth similar or do u think this is just too much overhead vs building separate apps from scratch?

what tools did they use to make such seamless cross-platform integration?

link: https://hackernoon.com/building-a-crossplatform-ollama-dashboard-with-95percent-shared-code?source=rss

bad website club is hosting a free responsive web design bootcamp based on

we're running another round of our online course! it's totally free and you can join from home. we help folks work through those freeCodeCamp lessons to get their feet wet with responsive design. if ya wanna level up your dev game, check this out ⭐

link: https://www.freecodecamp.org/news/bad-website-club-bootcamp-based-on-freecodecamp-rwd-cert/

hidden tech debt in agentic engineering

anyone can spin up an agent locally these days with minimal fuss - just a few llm calls and prompts. but there's hidden technical debt lurking here ⬆️.

i've noticed that while building agents feels quick, the underlying infrastructure often isn't built for long-term maintenance or scalability. this could lead to issues like performance bottlenecks or security vulnerabilities down the line if not managed properly.

what do you think about managing technical debt in agentic projects? have any tips on keeping things clean and efficient over time?

anyone else run into unexpected tech challenges with their agents recently?

full read: https://thenewstack.io/hidden-agentic-technical-debt/

type-safe lazy & secure plugin arch in react ⚡

in 2026, modern apps need to evolve super fast, but our teams can't keep up with a single monolith. product owners wanna add features independently and experiment like crazy.

i stumbled on this cool guide explaining how you could do it all without going insane or breaking security ✅ check out the link below for more details if u're curious. i'm still working through some of these ideas myself, so ymmv. anyone trying something similar got tips? let's chat!
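the guide is react/typescript; as a language-neutral sketch of the same three properties, here's a python plugin registry where plugins are typed against a `Plugin` protocol, loaded lazily on first use, and rejected at registration if they ask for permissions the host hasn't granted. all names here are hypothetical, not from the guide:

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol

class Plugin(Protocol):
    """What every plugin must provide (checked by type checkers such as mypy)."""
    name: str
    def render(self) -> str: ...

@dataclass(frozen=True)
class PluginSpec:
    loader: Callable[[], Plugin]               # only called on first use (lazy)
    permissions: frozenset[str] = frozenset()  # capabilities the plugin asks for

@dataclass
class Registry:
    granted: frozenset[str]                    # capabilities the host allows
    _specs: dict[str, PluginSpec] = field(default_factory=dict, init=False)
    _loaded: dict[str, Plugin] = field(default_factory=dict, init=False)

    def register(self, key: str, spec: PluginSpec) -> None:
        missing = spec.permissions - self.granted
        if missing:
            raise PermissionError(f"{key} requests ungranted permissions: {sorted(missing)}")
        self._specs[key] = spec

    def get(self, key: str) -> Plugin:
        if key not in self._loaded:            # load once, then cache
            self._loaded[key] = self._specs[key].loader()
        return self._loaded[key]
```

in a react app the `loader` role would typically be played by a dynamic `import()`, so a plugin's code isn't even downloaded until someone opens it.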

link: https://www.freecodecamp.org/news/how-to-design-a-type-safe-lazy-and-secure-plugin-architecture-in-react/

afterpack dev here

found this interesting: claude code's source didn't leak; it was public for years. the deal is simple - a sourcemap file let everyone think there's hidden magic, but it just links to publicly available js. my afterpack analysis showed the supposedly secret sauce wasn't so secret after all ⚡

>so they were basically saying "look at this open code" and hoping no one noticed

anyone else digging into similar cases? share your findings!

article: https://dev.to/nikitaeverywhere/claude-codes-source-didnt-leak-it-was-already-public-for-years-34le

prompt engineering for senior devs: scaling excellence without technical

context injection & constraint setting: seniors can use ai to eliminate boilerplate and automate testing by providing reference implementations, forcing a hunt for edge cases, and focusing docs on "why" instead of just explaining the code. this way you avoid piling up tech debt ⚠️.

i've been playing around with it in my latest project, and man, does ai make life easier! i wonder how others are integrating these techniques into their dev processes.

https://hackernoon.com/prompt-engineering-for-senior-devs-scaling-excellence-without-technical-debt?source=rss

2036 Tech SEO Time Travel Challenge

imagine you could go back in time to 1991 with a modern-day technical optimization toolkit for early web developers! would it be magic or madness? let's find out! what if we tried optimizing the very first webpage, like [code]? how would search engines and crawlers handle this?

heres a fun experiment:

- Rewrite Tim Berners-Lee's original html with modern best practices.

> Would the World Wide Web look different if it started out like `<!DOCTYPE html><html lang="en"><head>...</head></html>`?

then, test its crawlability and indexing by:

1. using google search console to see how a prehistoric webpage would fare today

2. creating an xml sitemap for the "world wide web" (a single page)

3. submitting it through search console (formerly webmaster tools)
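search console takes xml sitemaps (an html sitemap is meant for humans), so the sitemap step would look something like this. a minimal python sketch using only `xml.etree`; `http://info.cern.ch/hypertext/WWW/TheProject.html` is the address of that first page, and `build_sitemap` is a hypothetical helper:

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls: list[str]) -> bytes:
    """Minimal sitemaps.org document: one <url><loc> entry per page."""
    ET.register_namespace("", SITEMAP_NS)  # serialize as the default xmlns, no prefix
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in urls:
        entry = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)

print(build_sitemap(["http://info.cern.ch/hypertext/WWW/TheProject.html"]).decode("utf-8"))
```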

what insights can we gain about early optimization practices versus what works today? let's find out and share our results!

Schema Markup Mysteries

Why is my schema not showing up in Google Search results? I've added it all according to their docs! I followed every step:

- Verified it with Google's structured data testing tool

- Added markup for rich snippets and structured data

But no dice. The search result snippet still looks plain.

Anyone else run into this issue or have a tip? I feel like there's something subtle I'm missing. ⚡

Improving SEO with Structured Data

When dealing w/ rich snippets in search results, you gotta use structured data correctly ⚡ Here's a common mistake many developers make: not using `itemscope`, which can lead Google and other crawlers to ignore your schema markup entirely ❌

Let me show you the right way:

    <article itemscope itemtype="https://schema.org/Article">
      <h1 itemprop="name">The Title of Your Article</h1>
      <!-- Rest of the content -->
    </article>

By including `itemscope`, search engines can properly understand and display your content in rich snippets. W/o it, even if the rest of your schema markup is perfect, Google might just ignore the metadata.

Always test with a tool like Google's Rich Results Test to ensure everything is working as expected
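To catch the missing-`itemscope` mistake before Google does, you can scan your HTML for `itemprop` attributes that have no enclosing `itemscope`. A minimal sketch using Python's built-in `html.parser`; `MicrodataChecker` and `orphan_itemprops` are hypothetical names, and it only checks nesting, not whether your types and properties are valid schema.org (that's what the Rich Results Test is for):

```python
from html.parser import HTMLParser

# elements that never get a closing tag, so they must not be pushed on the stack
VOID = {"area", "base", "br", "col", "embed", "hr", "img", "input",
        "link", "meta", "source", "track", "wbr"}

class MicrodataChecker(HTMLParser):
    """Flags itemprop attributes that have no enclosing itemscope element."""

    def __init__(self) -> None:
        super().__init__()
        self.stack: list[tuple[str, bool]] = []   # (tag, opens an itemscope?)
        self.orphans: list[tuple[str, int]] = []  # (itemprop value, line number)

    def handle_starttag(self, tag, attrs):
        names = dict(attrs)
        in_scope = any(scoped for _, scoped in self.stack)
        if "itemprop" in names and not in_scope:
            self.orphans.append((names["itemprop"], self.getpos()[0]))
        if tag not in VOID:
            self.stack.append((tag, "itemscope" in names))

    def handle_endtag(self, tag):
        # pop back to the matching open tag (tolerates sloppy, unclosed HTML)
        for i in range(len(self.stack) - 1, -1, -1):
            if self.stack[i][0] == tag:
                del self.stack[i:]
                break

def orphan_itemprops(html: str) -> list[tuple[str, int]]:
    checker = MicrodataChecker()
    checker.feed(html)
    checker.close()
    return checker.orphans
```

Run it on a page that uses `itemprop` without an `itemscope` ancestor and you get each orphaned property back with its line number.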

best medical speech recognition tools 2026: a deep dive into top apis &

i just stumbled upon an awesome breakdown of some cutting-edge medical speech recognition solutions for doctors. it's got detailed comparisons and insights on what makes them tick! if you're in healthcare tech, this is definitely worth checking out.

the article covers everything from ease of use to integration with existing systems - pretty much all the key points a busy doc would need when evaluating new tools ⚕️

what do y'all think about speech recog for medical docs? any personal faves or must-have features you look for before jumping on board?

anyone out there using these in practice and have some real-world feedback to share?

➡ if so, hit reply!

full read: https://hackernoon.com/3-30-2026-techbeat?source=rss

96%+ codebases rely on open source; ai's slop is a risk

i just stumbled upon this stat: 96%+ of active projects use some form of open-source software. but here's where it gets concerning - artificial intelligence seems to be flooding our repos with "slop": pull requests that contributors can't really explain or even understand.

ai is ddos-ing open source, pushing through code without proper review or explanation ⚡ this is a major issue if the maintainers can't keep up.

anyone else notice their repositories getting spammed by these mysterious ai-generated prs? how are you guys dealing with it?

➡️ do we need better tools to filter out noise or should developers just be more vigilant about reviewing code?

thoughts anyone?

full read: https://thenewstack.io/ai-slop-open-source/

Schema Markup Pitfalls

Most of us focus on HTML optimization, but schema.org data can really push those SEO boundaries ⚡ I noticed a common mistake: overusing structured snippets without proper context!

Google's John Mueller mentioned that too much emphasis might backfire. He suggested using it where you have actual rich content to support. Think twice before littering your site with schema markup just for the sake of numbers.

So, here's a quick guide:

1. Audit first: Use tools like Google's Rich Results Test or the Schema Markup Validator.

2. Choose relevant schemas based on page type and intent (e.g., Article, LocalBusiness).

3. Keep it natural: Don't stuff content just to fit in more schema.

By being mindful, we can truly enhance user experience AND boost our SEO!

isolation boundaries in multi-tenant ai systems: architecture is key

architecture matters. in 2026's ml world ⚡️, isolation between tenants isn't just a nice-to-have - it's vital. most issues stem from shared execution paths or config state rather than auth breaches. think of it like apartments in the same building: you want each tenant to have their own bathroom and kitchen, without accidentally sharing them with the neighbors.

i've seen systems where one tenant's retry pressure bumped up everyone else's load ⬆️, causing chaos. clear boundaries keep your app running smoothly even when someone next door is being a bit too aggressive ✨.
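one common guardrail for exactly that retry-pressure problem is a per-tenant rate limiter (a bulkhead, basically): every tenant gets its own token bucket, so one tenant hammering retries only drains its own budget. a minimal python sketch with an injectable clock; the rates and names are hypothetical, not from the article:

```python
import time
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class TokenBucket:
    rate: float       # tokens added per second
    capacity: float   # maximum burst
    tokens: float     # tokens currently available
    updated: float    # clock reading at the last refill

    def allow(self, now: float) -> bool:
        # refill for the time elapsed since the last call, capped at capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

@dataclass
class TenantLimiter:
    rate: float
    capacity: float
    clock: Callable[[], float] = time.monotonic
    buckets: dict[str, TokenBucket] = field(default_factory=dict)

    def allow(self, tenant_id: str) -> bool:
        """One bucket per tenant: a noisy tenant's retries only drain its own budget."""
        now = self.clock()
        bucket = self.buckets.get(tenant_id)
        if bucket is None:  # new tenants start with a full bucket
            bucket = self.buckets[tenant_id] = TokenBucket(self.rate, self.capacity, self.capacity, now)
        return bucket.allow(now)
```

the same per-tenant split applies beyond rate limits: separate queues, worker pools, and storage namespaces keep one tenant's bad day from becoming everyone's.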

so if you're building or using multi-tenant ai platforms, double-check those isolation layers! they're the real guardrails against unexpected failures.

anyone else been hit by shared storage namespaces? i'm curious to hear your stories and solutions.

full read: https://dzone.com/articles/isolation-boundaries-multi-tenant-ai-architecture-guardrail

centralized master data hub: architecture & governance

in my research today i stumbled upon a solution for those dealing with fragmented legacy apps and duplicated core entities like countries or products. it's called a master data hub (mdh). the idea is simple yet powerful: build one central repository to store your master data, ensuring consistency across all systems ✅. the mdh is built as microservices, with modern apis for seamless integration and governance policies that keep everything in check ⭐.
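the heart of a hub like that is consolidating duplicates into one "golden record" per business key and remembering where each record came from. a rough python sketch, assuming countries keyed by a normalized iso-style code and a hypothetical rule that the erp system's name wins; the rows and names are made up for illustration:

```python
from collections import defaultdict

# hypothetical duplicated "country" rows pulled from three legacy apps
LEGACY_ROWS = [
    {"source": "billing", "code": "de", "name": "Germany"},
    {"source": "crm", "code": "DE ", "name": "Deutschland"},
    {"source": "erp", "code": "FR", "name": "France"},
]

def build_golden_records(rows, preferred_source="erp"):
    """Group rows by a normalized business key and keep one canonical record per key.

    The name from `preferred_source` wins when present; otherwise the first row seen.
    Each golden record also lists which legacy systems contributed to it (lineage).
    """
    groups = defaultdict(list)
    for row in rows:
        groups[row["code"].strip().upper()].append(row)
    hub = {}
    for key, members in groups.items():
        winner = next((r for r in members if r["source"] == preferred_source), members[0])
        hub[key] = {"code": key, "name": winner["name"],
                    "sources": sorted(r["source"] for r in members)}
    return hub
```

the survivorship rule ("erp wins") is exactly the kind of decision the governance side of an mdh should own and document, rather than each consuming app.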

im curious if anyone has tried this approach or knows of similar solutions? how did it work out?

whats been working (or not) with your data management strategies lately, peeps?

⬇

link: https://dzone.com/articles/centralized-master-data-hub

minio aistor & ampere® computing reference architecture for high perf

just stumbled upon this cool setup from minio, pairing their aistor solution with ampere hardware. it's all about building out an ultra-fast storage cluster tailored to serve up inferences like hotcakes! i mean, who doesn't want a system that can serve up AI predictions as quick as your morning coffee?

the key here is making sure you've got the right ingredients: super fast drives, ampere cpus (known for their sweet perf), and rock-solid networking to keep everything talking nicely between nodes & cores. and of course, minio's aistor handles all the heavy lifting of storing your data without breaking a sweat.

so if u're working on something where time is money, or just want the best damn setup around, give this stack some serious consideration! what projects are you running with similar setups? anyone tried out ampere hardware yet and can vouch for it?

drop a line below, sharing your thoughts & experiences. lets build that community knowledge base together!


link: https://dzone.com/articles/minio-aistor-ampere-ai-inference-architecture

SEO Trends in 2026

Google just rolled out its latest core update focusing on real user experience metrics (RUM). This shift means sites with poor load times will see a significant drop, regardless of technical SEO efforts. But wait, you might think, doesn't that make everything harder? Actually, it makes it clearer.

Hot Take:

If your site isn't optimized for fast loading on mobile devices and has heavy JavaScript bloat, now is the time to clean up those files!

purge-unused-js

What's Changed

Previously, we could rely heavily on server response times. Now? It's about minimizing payload sizes with efficient code.

>Imagine a user clicking your link at 4 PM EST and waiting for three seconds just because of an unnecessary JS file.


That experience is now penalized big time by Google's ranking systems

Key Steps

1. Audit Your Code: Use tools like Lighthouse to find bloated scripts & stylesheets

2. Minimize Requests: Fewer HTTP requests mean faster load times ✨

3. Inline Critical CSS: This reduces the need for blocking resources and speeds up rendering ⚡
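For step 1, Lighthouse does the heavy lifting, but a quick first pass is easy to script: list external scripts in `<head>` that have neither `async` nor `defer`, plus stylesheets in `<head>`, since both hold up first render. A minimal sketch using Python's built-in `html.parser`; `find_render_blocking` is a hypothetical helper, not a Lighthouse API:

```python
from html.parser import HTMLParser

class RenderBlockingFinder(HTMLParser):
    """Collects resources in <head> that block first render."""

    def __init__(self) -> None:
        super().__init__()
        self.in_head = False
        self.blocking: list[str] = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "head":
            self.in_head = True
        elif not self.in_head:
            return
        elif tag == "script" and "src" in a:
            # async/defer scripts and module scripts (deferred by default) don't block
            if "async" not in a and "defer" not in a and a.get("type") != "module":
                self.blocking.append(a["src"])
        elif tag == "link" and a.get("rel") == "stylesheet":
            self.blocking.append(a.get("href", ""))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False

def find_render_blocking(html: str) -> list[str]:
    finder = RenderBlockingFinder()
    finder.feed(html)
    finder.close()
    return finder.blocking
```

Scripts that turn up here are candidates for `defer` (or removal), and stylesheets are where inlining critical CSS pays off.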

Under Construction

For sites with older architectures, this can be a daunting task.

But remember - small wins add up to large improvements over time.

Final Thought: Optimizing your site isn't just about ranking higher; it's also about ensuring that users have an enjoyable experience. And in 2026 and beyond? That's what matters most

architectural governance in 2026

in this genai era, where code is a dime a dozen but alignment isn't ⭐, traditional review boards are hitting walls with ai-generated output. i stumbled upon "declarative architecture": turning adrs and event models into automated guardrails. it's about shifting from "dumping left" to making the conformant path the easier one, all while keeping things loosely coupled ✅.

this approach seems like a game-changer for decentralized governance w/o sacrificing cohesion.
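to make the "adr becomes a guardrail" idea concrete: write the decision down as data and check it in ci. a minimal python sketch that enforces a hypothetical adr ("ui code must not import the db layer") by walking each module's imports with `ast`; the rule format and names are made up, not from the article:

```python
import ast

# hypothetical ADR, captured as data instead of a wiki page:
# ADR-0007 "ui code talks to services, never to the db layer directly"
ADR_RULES = [
    {"adr": "ADR-0007", "applies_to": "app.ui", "forbidden": "app.db"},
]

def _within(name: str, package: str) -> bool:
    """True if `name` is `package` itself or one of its submodules."""
    return name == package or name.startswith(package + ".")

def check_module(module_name: str, source: str, rules=ADR_RULES) -> list[str]:
    """Return ADR violations for one module, based on its absolute imports."""
    imported = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            imported.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            imported.add(node.module)
    return [
        f"{module_name}: imports {name} ({rule['adr']})"
        for rule in rules
        if _within(module_name, rule["applies_to"])
        for name in sorted(imported)
        if _within(name, rule["forbidden"])
    ]
```

the conformant path gets easier because the check names the adr in its error message, so whoever (or whatever) wrote the code learns the rule at the moment it matters.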

what do you guys think? have any of u tried smth similar in your projects?

anyone out there using this or related approaches and seeing success with it?

hit me up if anyone has insights!

i'd love to hear more about how others are tackling these challenges

link: https://www.infoq.com/articles/architectural-governance-ai-speed/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

kubernetes co-founder brendan burns thinks ai-generated code will become

brendan's words: ai-written stuff is gonna be so common it'll blend in. kinda like how we don't notice our phones or cars anymore, right? ⚡ i guess the future of dev tools could look a lot more automated than what we're using now.

i've been playing around with some ai-assist plugins and they're pretty neat for quick fixes, but when it comes to big projects, idk. do you think we'll see widespread adoption soon, or is this just another hype cycle?

anyone got any cool uses of ai in dev yet? let's chat!

>what about the jobs, bro

i hear ya on that one - automation can be scary but i reckon it opens up more time for us to focus where humans shine: creativity and problem-solving. what do you think?

https://thenewstack.io/ai-generated-code-invisible/

slow pandas code woes

i've been hitting some slowdowns with my pandas scripts lately. it's not just one thing - it feels like everything is taking longer. i mean seriously: four-hour pipelines that used to take twenty minutes, jobs timing out on data sets from six months ago. and the worst part? sometimes you look at your code and think "this should work," but boom - slow as molasses.

most of these issues stem from row-level iteration in python. it's a common pitfall that can really drag down performance, even when everything looks correct on paper ⚡
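the classic fix for row-level iteration is vectorization: express the computation over whole columns so pandas hands the loop to numpy. a minimal before/after sketch, assuming pandas is installed; the `price`/`qty` columns and function names are hypothetical:

```python
import pandas as pd

def totals_iterrows(df: pd.DataFrame, tax_rate: float) -> pd.Series:
    """Slow pattern: a Python-level loop that builds one Series object per row."""
    out = []
    for _, row in df.iterrows():
        out.append(row["price"] * row["qty"] * (1 + tax_rate))
    return pd.Series(out, index=df.index, name="total")

def totals_vectorized(df: pd.DataFrame, tax_rate: float) -> pd.Series:
    """Fast pattern: one column-wise expression; the loop runs inside NumPy."""
    return (df["price"] * df["qty"] * (1 + tax_rate)).rename("total")
```

both return the same series; the difference is that `iterrows` materializes a python object per row, which is typically where the time goes once the frame gets big.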

anyone else running into similar snags or have any tips for speeding things up? i'm all ears!

article: https://dzone.com/articles/stop-slow-pandas-code-vectorization-polars-duckdb

building a cleaner network layer in swift

i stumbled upon this neat approach to cleaning up your networking code using swift and some architectural patterns. it's like taking that messy junk drawer of url construction, completion handlers, and error handling & making it all reusable + testable ✨.

basically, instead of scattering all this logic around in view controllers or models (which makes things tough to maintain and unit-test), you build a dedicated network layer. think of it as creating your own little api client that's easy on the eyes!
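the article does this in swift; here's the same shape sketched in python so the moving parts are easy to see: an `Endpoint` value describing the request, a client that builds urls in one place, typed errors for non-2xx responses, and a transport you can swap for a fake in unit tests. all names are hypothetical:

```python
import json
from dataclasses import dataclass, field
from typing import Callable
from urllib.parse import urlencode

@dataclass(frozen=True)
class Endpoint:
    """Describes one API call; views never build URLs by hand."""
    path: str
    method: str = "GET"
    query: dict = field(default_factory=dict)

class APIError(Exception):
    def __init__(self, status: int, body: str):
        super().__init__(f"HTTP {status}: {body[:80]}")
        self.status = status

# transport: (method, url) -> (status, body); swap in a fake for unit tests
Transport = Callable[[str, str], tuple[int, str]]

class APIClient:
    def __init__(self, base_url: str, transport: Transport):
        self.base_url = base_url.rstrip("/")
        self.transport = transport

    def url_for(self, ep: Endpoint) -> str:
        qs = f"?{urlencode(ep.query)}" if ep.query else ""
        return f"{self.base_url}{ep.path}{qs}"

    def send(self, ep: Endpoint) -> dict:
        status, body = self.transport(ep.method, self.url_for(ep))
        if not 200 <= status < 300:
            raise APIError(status, body)
        return json.loads(body)
```

in swift the same roles usually map to an `Endpoint` struct or enum, a client type, an `Error` enum, and a protocol wrapping `URLSession` so tests can inject a mock.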

i've been playing with some clean architecture stuff recently, trying out for building these layers because of its type safety.

anyone else tried this in their projects? what did you find worked best?

article: https://dzone.com/articles/robust-swift-network-layer-clean-architecture

when to use multi-agent systems vs when not

i've been diving into this lately because every team seems excited about adding more agents. but let's be real: it doesn't always make sense.

multi-agent systems can get really complex, with lots of coordination overhead and potential failure points ⚡️. in my experience building these things out for a project last year (2025), the teams that actually pulled off something successful were the ones with clear goals who knew when to say no.

so before you jump on this bandwagon, ask yourself: do we really need more agents? or are there simpler solutions?

what about your projects using multi-agent systems these days? any success stories i should know of?

➡️

more here: https://dev.to/diven_rastdus_c5af27d68f3/when-to-use-multi-agent-systems-and-when-not-to-5ah1

flutter ci/cd pipeline with codemagic: quality gates & store releases

if you've been manually building flutter apps and uploading them to the stores like a boss but secretly wished there was an easier way, i found something that might interest ya. it's all about setting up your own complete ci/cd workflow using codemagic, from making sure pull requests are top-notch quality ⚡️ all the way to automated store releases.

i set this pipeline to run on every merge into main and voilà - i get a fully signed apk ready for the google play console. no more fumbling with keystores or worrying about builds breaking
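a typical quality gate on those pull requests is a coverage threshold. `flutter test --coverage` writes an lcov report to `coverage/lcov.info`; here's a small python sketch that totals the report's `LF`/`LH` records and returns a pass/fail exit code, assuming a hypothetical 80% minimum. how you wire it into a codemagic script step is up to your workflow file:

```python
def lcov_line_coverage(lcov_text: str) -> float:
    """Total line coverage (%) from an lcov report, summing every file's LF/LH records."""
    found = hit = 0
    for line in lcov_text.splitlines():
        if line.startswith("LF:"):      # lines found (instrumented) in one file
            found += int(line[3:])
        elif line.startswith("LH:"):    # lines hit by at least one test
            hit += int(line[3:])
    return 100.0 * hit / found if found else 100.0

def coverage_gate(lcov_path: str, minimum: float = 80.0) -> int:
    """Return a process exit code: 0 lets the pipeline continue, 1 fails the build."""
    with open(lcov_path, encoding="utf-8") as f:
        pct = lcov_line_coverage(f.read())
    print(f"line coverage {pct:.1f}% (minimum {minimum:.1f}%)")
    return 0 if pct >= minimum else 1
```

a ci step would exit with `coverage_gate("coverage/lcov.info")` as its status, so a pr that drops coverage below the bar never reaches the release stage.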

have you tried codemagic yet? what's your experience been like so far?

keep those apps rolling out smoothly

article: https://www.freecodecamp.org/news/build-a-complete-flutter-ci-cd-pipeline-with-codemagic/

Kubernetes Scheduler Plugins: Optimizing AI/ML Workloads

Picture this: Enterprises burn $400K monthly on GPU clusters humming at 35% capacity while workloads queue endlessly outside. Why? The stock scheduler thinks GPUs are interchangeable, counting tokens - oblivious to silicon geography, workload personality, or the thundering cost-per-second of idle accelerators. What follows dissects how purpose-built scheduler plugins flip that equation. We're talking technical guts: architectural decisions, deployment mechanics, working code that actually ships. No hand-waving. Just the machinery needed to make GPUs earn their keep.

full read: https://dzone.com/articles/kubernetes-scheduler-plugins-ai-ml
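Real scheduler plugins are Go code hooked into the Kubernetes scheduling framework's extension points (Filter and Score among them). To show just the logic, here's a Python sketch of those two phases for GPU pods: filter out nodes with the wrong GPU model or too few free GPUs, then score the feasible ones to bin-pack, so whole nodes stay free for big jobs. The `Node`/`Pod` types and the scoring rule are hypothetical, not taken from the article:

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Node:
    name: str
    gpu_model: str
    free_gpus: int
    total_gpus: int

@dataclass
class Pod:
    name: str
    gpu_model: str  # e.g. taken from a node selector or resource class
    gpus: int

def filter_nodes(pod: Pod, nodes: list[Node]) -> list[Node]:
    """Filter phase: keep only nodes with the requested GPU model and enough free GPUs."""
    return [n for n in nodes if n.gpu_model == pod.gpu_model and n.free_gpus >= pod.gpus]

def score(pod: Pod, node: Node) -> int:
    """Score phase (0-100): favor the node this pod would fill most completely,
    so partially used nodes get packed and whole nodes stay free for big jobs."""
    used_after = node.total_gpus - node.free_gpus + pod.gpus
    return round(100 * used_after / node.total_gpus)

def schedule(pod: Pod, nodes: list[Node]) -> Optional[str]:
    feasible = filter_nodes(pod, nodes)
    if not feasible:
        return None  # nothing fits: the pod stays pending
    # highest score wins; ties broken by node name for determinism
    return min(feasible, key=lambda n: (-score(pod, n), n.name)).name
```

With a stock "spread" policy, a 2-GPU job might land on an empty 8-GPU node; packing it onto a half-used node instead is one way to push that 35% utilization up.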

refreshing stale code intelligence

jeff smith hit it out of the park at qcon london 2026 when he talked about how ai coding models are getting too outdated for real-world dev needs. while these tools speed up development, they lack the repo-specific knowledge needed to produce production-ready code ⚡ i mean seriously, if you're relying on an AI model from last year's training cycle right now, its knowledge could be 2 years out of date! that's a huge gap in accuracy and relevance.

it makes me wonder how many devs are running into issues because their ai tools aren't keeping up with the latest tech trends. any takers? have you noticed this problem firsthand or do u think i'm getting too paranoid about it?

full read: https://www.infoq.com/news/2026/03/stale-code-intelligence/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

cloud-native java arch got a major upgrade in 2026

in the old days of enterprise java dev ⚡ you'd pick an app server and go monolithic ⚡. now? modern teams aim for lightning-fast releases, robust resilience across wild workloads, and elastic cost-performance. that's what cloud-native java arch does: systems built to thrive in this new world.

what's your take on switching from a traditional setup?

full read: https://dzone.com/articles/cloud-native-java-microservices-serverless

ai-generated infra code: a double-edged sword

i just listened to an interview with marcin wyszynski from spacelift about ai writing infrastructure. it's pretty wild that this is even possible, but most teams are holding back for now.

the idea of having machines spit out our deploys and setups sounds amazing in theory ⚡ but there's a reason many devs aren't jumping on board just yet. some concerns include security risks if the ai makes mistakes or gets compromised. also, i wonder how much human oversight will still be needed to catch edge cases.
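one way to keep a human in the loop without reviewing every line: let ai-authored changes auto-apply only when the plan destroys nothing. a minimal python sketch that reads the json from `terraform show -json <planfile>` and flags any resource whose actions include a delete (which also covers replacements); the policy itself is a hypothetical example, not spacelift's:

```python
import json

def destructive_changes(plan_json: str) -> list[str]:
    """List resource addresses a plan would delete or replace.

    Expects the JSON from `terraform show -json <planfile>`, where each entry in
    `resource_changes` carries `change.actions` such as ["create"], ["delete"],
    or ["delete", "create"] / ["create", "delete"] for replacements.
    """
    plan = json.loads(plan_json)
    return sorted(
        rc["address"]
        for rc in plan.get("resource_changes", [])
        if "delete" in rc["change"]["actions"]
    )

def needs_human_approval(plan_json: str) -> bool:
    """Policy sketch: AI-authored plans may auto-apply only if nothing is destroyed."""
    return bool(destructive_changes(plan_json))
```

the nice part of gating on the plan rather than the code is that it doesn't matter whether a human or a model wrote the hcl; you review the blast radius either way.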

anyone else tried integrating an ai into their workflow? what do you think about giving it more leeway in your infra management processes?

i'm curious where this is all headed!

link: https://thenewstack.io/spacelift-ai-infrastructure-code/

speed gains matter: is it time to switch from eslint to oxlint?

i stumbled upon a really interesting comparison between eslint and oxlint. turns out migrating could save some serious lint time on modern js projects.

the biggest takeaway? the article's real-world benchmarks show significant speed improvements with no compromise on code quality or rules enforcement.

so, when does it make sense to pull the trigger?

if your team deals heavily with large-scale apps and needs every bit of performance you can get - especially during builds - it might be worth checking out oxlint.

what about u? have ya made this switch yet?

⬇ do ye know any other tools that offer similar speed benefits without sacrificing quality checks?

https://blog.logrocket.com/retire-eslint-migrate-oxlint/

WebP Triumph Over JPEG

JPEG has ruled image compression for so long, but WebP is leaving a lasting impression in 2026. Google's own experiments showed that at equivalent quality, lossy WebP files are about 25-34% smaller than JPEG, significantly reducing page load times.

> Imagine: users on slower connections get the same visual fidelity with less data.

I switched out JPEGs for WebP across our image-heavy e-commerce site and saw a 20-40ms reduction in TTFB, just by changing file formats!

So if your images are bloated, give WebP a try. Not .jpg anymore.

Forget about the lazy way of compressing JPEGs. WebP is where it's at now! ⬆️
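If you swap formats server-side rather than with an HTML `<picture>` element, one common approach is content negotiation on the request's `Accept` header. Below is a minimal sketch, assuming each image is stored as both `name.webp` and `name.jpg` side by side (that layout and the helper names are hypothetical, not from the post). Responses chosen this way should also send `Vary: Accept` so caches don't hand a WebP file to a client that never asked for one.

```python
def accepted_types(accept_header: str) -> set[str]:
    """Return media types the client accepts (q > 0), lower-cased, parameters dropped."""
    accepted = set()
    for part in accept_header.split(","):
        media_type, _, params = part.partition(";")
        q = 1.0  # default quality value when no q= parameter is given
        for param in params.split(";"):
            key, _, value = param.strip().partition("=")
            if key.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed q value as "not acceptable"
        if q > 0:
            accepted.add(media_type.strip().lower())
    return accepted


def choose_image_variant(accept_header: str, base_name: str) -> str:
    """Serve the hypothetical .webp file only when the browser explicitly lists image/webp."""
    if "image/webp" in accepted_types(accept_header):
        return f"{base_name}.webp"
    return f"{base_name}.jpg"
```

The sketch deliberately ignores wildcards like `image/*`: browsers that decode WebP list `image/webp` explicitly, so falling back to JPEG for everything else is the safer default.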

discord's architecture at scale

i stumbled upon this cool stuff about discord's backend while digging through some tech docs. did you know they use the actor model for their systems? it basically lets them handle data updates in a distributed way without all the messy locks and concurrency issues ⚡ pretty neat, right? i wonder how that impacts performance during those big events when servers are maxed out ❓
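To make the actor idea concrete: each actor owns its state privately, and the only way to change that state is to send it a message, which the actor processes one at a time from its mailbox. Discord's engineering blog has described building on Elixir, where this is native to the runtime; the Python `asyncio` sketch below is only an illustration of the pattern, not their code, and the `CounterActor` name and its messages are made up. (Since asyncio is single-threaded, this shows the message-passing shape rather than proving thread safety.)

```python
import asyncio


class CounterActor:
    """Toy actor: private state, a mailbox, and one task that handles messages in order."""

    def __init__(self) -> None:
        self._count = 0  # only ever touched by the actor's own run() task
        self._mailbox: asyncio.Queue = asyncio.Queue()

    async def run(self) -> None:
        while True:
            msg, reply = await self._mailbox.get()
            if msg == "incr":
                self._count += 1
                reply.set_result(self._count)
            elif msg == "stop":
                reply.set_result(self._count)
                return
            else:
                reply.set_exception(ValueError(f"unknown message: {msg!r}"))

    async def send(self, msg: str) -> int:
        # Callers never read or write _count directly; they post a message and await a reply.
        reply = asyncio.get_running_loop().create_future()
        await self._mailbox.put((msg, reply))
        return await reply


async def main() -> int:
    actor = CounterActor()
    runner = asyncio.create_task(actor.run())
    # 100 concurrent senders and no locks: the mailbox serializes every update.
    await asyncio.gather(*(actor.send("incr") for _ in range(100)))
    final = await actor.send("stop")
    await runner
    return final


if __name__ == "__main__":
    print(asyncio.run(main()))  # prints 100
```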

https://hackernoon.com/inside-discords-architecture-at-scale?source=rss

architecting scalable json pipelines: when one schema makes all the difference

i stumbled upon this gem while working with a bunch of semi-structured data. turns out using pyspark to handle your schemas can really streamline things, especially if you're dealing with tons and TONS of JSON files. the key is defining a single pyspark schema for everything coming through; it simplifies parsing immensely ⚡ has anyone else tried this approach? what worked or didn't work as expected?
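In PySpark itself, the usual way to do this is to build a `StructType` once and pass it to `spark.read.schema(schema).json(path)`, which also skips the extra pass Spark would otherwise spend inferring a schema. The pure-Python sketch below is not PySpark; it just illustrates the same idea with the standard library so it runs anywhere: one shared schema applied to every record, missing fields filled with `None`, and bad records set aside instead of failing the batch. The field names in `EVENT_SCHEMA` are hypothetical.

```python
import json

# Hypothetical shared schema: field name -> expected Python type.
EVENT_SCHEMA: dict[str, type] = {"id": str, "ts": int, "amount": float}


def parse_records(lines, schema=EVENT_SCHEMA):
    """Apply one schema to every JSON line.

    Known fields are projected out (extra keys are dropped), missing fields become None,
    and lines that aren't JSON objects or carry the wrong types are set aside.
    """
    good, corrupt = [], []
    for line in lines:
        try:
            raw = json.loads(line)
        except json.JSONDecodeError:
            corrupt.append(line)
            continue
        if not isinstance(raw, dict):
            corrupt.append(line)
            continue
        row, ok = {}, True
        for field, expected in schema.items():
            value = raw.get(field)
            if value is None:
                row[field] = None
            elif isinstance(value, bool):
                ok = False  # bool is an int subclass; don't let true/false pass as numbers
            elif expected is float and isinstance(value, int):
                row[field] = float(value)  # widen JSON integers, e.g. 2 -> 2.0
            elif isinstance(value, expected):
                row[field] = value
            else:
                ok = False
            if not ok:
                break
        if ok:
            good.append(row)
        else:
            corrupt.append(line)
    return good, corrupt
```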

anyone got any tips on handling massive data volumes efficiently without hitting the wall when scaling up with pyspark schemas?

pyspark schema: your new best friend for json pipelines

link: https://dzone.com/articles/scalable-json-pipelines-single-pyspark-schema

qcon london 2026: rewriting spotify's codebase with ai

at qcon london this year, jo kelly-fenton and aleksandar mitic from Spotify talked about honk, a new tool that uses AI to help migrate their massive codebase. it's like having a coding sidekick! the system is super smart at handling those tricky edge cases you always forget. i was curious how they managed such big migrations, and apparently standardizing everything first made reviews easier afterward. really good practice.

anyone else dealing with huge projects? what tools are y'all using to keep things streamlined?

➡️ do u have any ai-powered coding helpers in ur toolkit too?

spoiler alert

i wonder if honk could help us out when we need a quick migration or refactor

✨

link: https://www.infoq.com/news/2026/03/spotify-honk-rewrite/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global

health checks in 2026: liveness & readiness probes for kubernetes

quick win: setting up proper health check endpoints can save you a lot of headaches. i've been playing around with claude code to generate these rules and keep things running smoothly.

```
- /health/live: liveness (…) → db …
- /health/ready: readiness (…) → …
- /health: (…)
- postgresql check: SELECT 1
```

this setup ensures you're only reporting truly healthy states, no false positives. i wonder how many of us have been bitten by health checks that return 200 OK but still can't connect to the db.
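Here's a rough sketch of the liveness/readiness split described above, assuming a Python service. The handler names and response bodies are hypothetical, and `sqlite3` stands in for PostgreSQL purely so the example runs without a server; the readiness probe query is the same `SELECT 1`. The key design choice is that liveness checks nothing external, while readiness actually talks to the database.

```python
import sqlite3
from typing import Callable


def liveness() -> tuple[int, dict]:
    """/health/live: is the process responsive? Checks no dependencies on purpose,
    so a database outage doesn't get otherwise-healthy pods restarted."""
    return 200, {"status": "alive"}


def readiness(connect: Callable[[], sqlite3.Connection]) -> tuple[int, dict]:
    """/health/ready: can this instance serve traffic right now? Runs SELECT 1."""
    try:
        conn = connect()
        try:
            row = conn.execute("SELECT 1").fetchone()
        finally:
            conn.close()
    except sqlite3.Error as exc:
        # A DB failure should take the pod out of the Service's endpoints, not restart it.
        return 503, {"status": "not ready", "db": str(exc)}
    if row != (1,):
        return 503, {"status": "not ready", "db": "unexpected probe result"}
    return 200, {"status": "ready", "db": "ok"}
```

In the Kubernetes manifest you would then point `livenessProbe.httpGet.path` at `/health/live` and `readinessProbe.httpGet.path` at `/health/ready`, so a database blip makes the pod stop receiving traffic instead of getting killed.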

anyone else got some cool tricks for keeping things running smoothly? share!

more here: https://dev.to/myougatheaxo/claude-codedeherusuendopointowoshe-ji-suruyi-cun-sabisuque-ren-livenessreadinesskubernetesdui-ying-48oc

how to ask a great technical question: the art of debugging code

sometimes it feels like computers just do what we tell them instead of understanding our intentions. i've learned that asking clear and concise questions can make all the difference when you're stuck trying to debug something. the key:

when reaching out for help, be specific about your issue without overwhelming people with too much detail or context.

if it's a coding problem:

- what language are you using?

- share the ~10 lines of code that show where the error is

vs:

just saying "it doesn't work" isn't helpful. people need to see exactly which part breaks.

another tip:

include any relevant screenshots or console logs, but keep it simple; too many details can be overwhelming.

>if you're feeling sarcastic:

>"i just want a fix without understanding anything!"

be clear and concise when asking for help: the more specific your question is (without going overboard), the better your chances of getting quick, useful answers. anyone else run into issues like this lately?

https://www.freecodecamp.org/news/how-to-ask-a-great-technical-question/

ai writing your code for a week?

i stumbled across an interesting study where devs let ai write their code for 7 days straight. it's crazy to think about how that would impact workflows and productivity, especially with everything shifting towards automation in coding. i'm curious: has anyone tried something like this, or have thoughts on what could go right (or wrong)? i bet there'd be some pretty wild debugging sessions afterward!

more here: https://hackernoon.com/what-happens-when-you-let-ai-write-your-code-for-a-week?source=rss

schema-driven forms: stop fighting your validation

just stumbled upon this cool approach to dynamic form building using json schemas, which keeps frontend and backend validation in sync. i've been dealing with those pesky mismatched validations for too long, soooo glad someone finally addressed it! ⚡ anyone tried implementing something like this yet? any tips or gotchas you'd share? i mean seriously, why did no one think of doing things the obvious way before now!
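The core trick is that one schema document drives both sides: the frontend generates its inputs from it and the backend validates submissions against it, so the two can't drift apart. Below is a minimal sketch of that idea, assuming a hypothetical signup form and supporting only a tiny subset of JSON Schema keywords (`type`, `required`, `minLength`, `pattern`, `minimum`). In a real project you'd likely reach for a full validator such as the Python `jsonschema` package on the server or Ajv in the browser rather than hand-rolling this.

```python
import re

# Hypothetical shared schema, written in a small subset of JSON Schema.
SIGNUP_SCHEMA = {
    "type": "object",
    "required": ["email", "age"],
    "properties": {
        "email": {"type": "string", "minLength": 3, "pattern": r"^[^@\s]+@[^@\s]+$"},
        "age": {"type": "integer", "minimum": 13},
    },
}

# JSON Schema type names this sketch understands, mapped to Python types.
_PY_TYPES = {"string": str, "integer": int}


def validate(data: dict, schema: dict) -> list[str]:
    """Backend side: return human-readable errors (an empty list means valid)."""
    errors = [f"{name}: is required" for name in schema.get("required", []) if name not in data]
    for name, rules in schema.get("properties", {}).items():
        if name not in data:
            continue
        value = data[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, _PY_TYPES[rules["type"]]):
            errors.append(f"{name}: expected {rules['type']}")
            continue
        if "minLength" in rules and len(value) < rules["minLength"]:
            errors.append(f"{name}: must be at least {rules['minLength']} characters")
        if "pattern" in rules and not re.search(rules["pattern"], value):
            errors.append(f"{name}: does not match pattern")
        if "minimum" in rules and value < rules["minimum"]:
            errors.append(f"{name}: must be >= {rules['minimum']}")
    return errors


def form_fields(schema: dict) -> list[dict]:
    """Frontend side: derive form inputs from the same schema."""
    required = set(schema.get("required", []))
    return [
        {
            "name": name,
            "input": "number" if rules["type"] == "integer" else "text",
            "required": name in required,
        }
        for name, rules in schema.get("properties", {}).items()
    ]
```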

https://blog.logrocket.com/stop-fighting-schema-driven-form-validation/

generate oci architecture diagrams from terraform with one command

i found a cool plugin that turns manual draw.io diagramming into history. check it out: all you need to do is type /drawio-architect in your project, and it parses the terraform directory path or vcn name from existing .tfvars files! super handy for keeping diagrams up to date with minimal effort. i tried this out on a recent setup i worked on and it saved me so much time. definitely recommend it if you're working extensively with oracle cloud infrastructure. anyone else using similar tools? what do ya think about automating diagram generation like this? ps: also curious, have any of y'all implemented something custom for generating diagrams from terraform code, or are there other plugins/tools out there i should check out?
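For anyone thinking about a custom version, the first step is just pulling values out of `.tfvars` files. Below is a rough sketch of that step only; it is not how /drawio-architect works internally, and the `vcn_name` variable name is a hypothetical convention. It handles simple `key = "value"` lines (with optional trailing `#` or `//` comments) and skips maps, lists and multi-line HCL, so a real tool would want a proper HCL parser.

```python
import re
from pathlib import Path

# Matches simple `key = "value"` lines, optionally followed by a # or // comment.
# Maps, lists and multi-line HCL expressions are deliberately not handled.
_ASSIGN = re.compile(r'^\s*([A-Za-z_][A-Za-z0-9_-]*)\s*=\s*"([^"]*)"\s*(?:(?:#|//).*)?$')


def read_tfvars(text: str) -> dict[str, str]:
    """Collect string-valued assignments from .tfvars content (later keys win)."""
    values = {}
    for line in text.splitlines():
        match = _ASSIGN.match(line)
        if match:
            values[match.group(1)] = match.group(2)
    return values


def find_vcn_names(terraform_dir: str, key: str = "vcn_name") -> list[str]:
    """Scan every *.tfvars file in a directory for a (hypothetical) vcn_name variable."""
    names = []
    for path in sorted(Path(terraform_dir).glob("*.tfvars")):
        value = read_tfvars(path.read_text()).get(key)
        if value:
            names.append(value)
    return names
```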

let's hear your experiences!

more here: https://dev.to/sergio_farfn_b071cafc7ed/generate-oci-architecture-diagrams-from-terraform-with-one-claude-code-command-1f4b

agents writing code?

some agents have been running for hours now without a hitch, managing their own iterations ⚡ i heard some background coding tasks are hitting new limits. seems like we're pushing ai boundaries here. i wonder how this affects the software engineering process. do you think it's time to reassess the roles of engineers vs. developers? or is everything just fine as-is?

what projects have you seen these long-running agents tackle recently?

more here: https://thenewstack.io/ai-agents-software-engineering/

Schema Markup Optimization for Better Indexing

Google's crawling algorithms love structured data! But did you know that not all schema formats are created equal? I recently switched from Microdata to JSON-LD on a large e-commerce site, which improved our indexing by 30%. Here's why and how:

1. Microdata vs. JSON-LD:

- Microdata requires HTML attributes like `itemtype` & `itemscope`, making your markup more verbose.

2. JSON-LD is cleaner: it lets you put the schema in a single script block in the `<head>` of a page, keeping the content markup clean and easy to read for both humans AND machines.

3. Implementation:

- First step: generate JSON data representing the entities on each product or service page (e.g., `@type` and properties like name & description).

4. Example (example.json):

{"@context": "…", "@type": ["Product", "Offer"]}

5. Embed in head:

Add this JSON-LD to the `<head>` of your page using a `<script type="application/ld+json">` tag.

```html
<head>
  <!-- Other meta tags -->
  <script type="application/ld+json">
  {
    "@context": "…"
  }
  </script>
</head>
```

6. Test with Google's Rich Results Test (the older Structured Data Testing Tool has been retired): it'll help catch errors and confirm you're on the right track.

7. Monitor:

- After a few days, check your site in Search Console for improved indexing speed & visibility.
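If your product pages are rendered server-side, the JSON-LD block from steps 3-5 can be generated from your product data instead of hand-written. A minimal sketch follows, assuming a Python backend; the function name and its parameters are hypothetical. Note one deliberate swap: rather than the multi-type `["Product", "Offer"]` array in the example above, it nests the `Offer` under the Product's `offers` property, which is the more common schema.org pattern for product pages. `"https://schema.org"` is the standard schema.org context URL.

```python
import json


def product_jsonld(name: str, description: str, price: str, currency: str) -> str:
    """Build a <script type="application/ld+json"> block for one product page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
    }
    # ensure_ascii=False keeps non-ASCII product names readable; "</" is escaped as
    # "<\/" (still valid JSON) so a value can never close the <script> tag early.
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

The output goes straight into the page's `<head>`, and it's worth running a few generated pages through the Rich Results Test before rolling it out site-wide.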

By switching to JSON-LD schema markup, we saw faster crawling ⚡ and better rich-snippet display; definitely worth considering if optimizing structured data is on your radar.

>Remember: Quality over quantity. Focus only where it counts!

hiring for culture fit in tech teams: beyond just vibes

hey devs! i stumbled upon this talk by alicia collymore that's pretty eye-opening. it's all about how to move past surface-level "vibes" when hiring engineers and really dive into cultural alignment. she talks a lot about identifying specific values from the company culture during coding challenges or system design sessions, and making sure those match up with what you're looking for in your team.

one cool tip she drops is using interview debriefs to assess "culture add", meaning what new and different things a candidate can bring to a group. this could be super useful when we're hiring, especially since the market gets more competitive every day ⚡

what do you guys think? have any tips or experiences with culture fit in your teams?

let's chat about how to make our tech cultures awesome!

full read: https://www.infoq.com/presentations/cultural-alignment/?utm_campaign=infoq_content&utm_source=infoq&utm_medium=feed&utm_term=global